I remember my facial expression when I took my first technical SEO course.

It was something like this.

I felt confused and somewhat demotivated.

I was too scared to take on clients.

I tried to read a lot about technical SEO issues, but finding a good resource was problematic.

And the tools for technical SEO? They were expensive.

So while brainstorming my first blog post for Hackernoon, I didn’t hesitate to choose this topic.

In this article, you will learn how to find and fix common technical SEO issues using Google Search Console. You will also learn how to prioritize technical SEO fixes.

What are technical SEO issues?

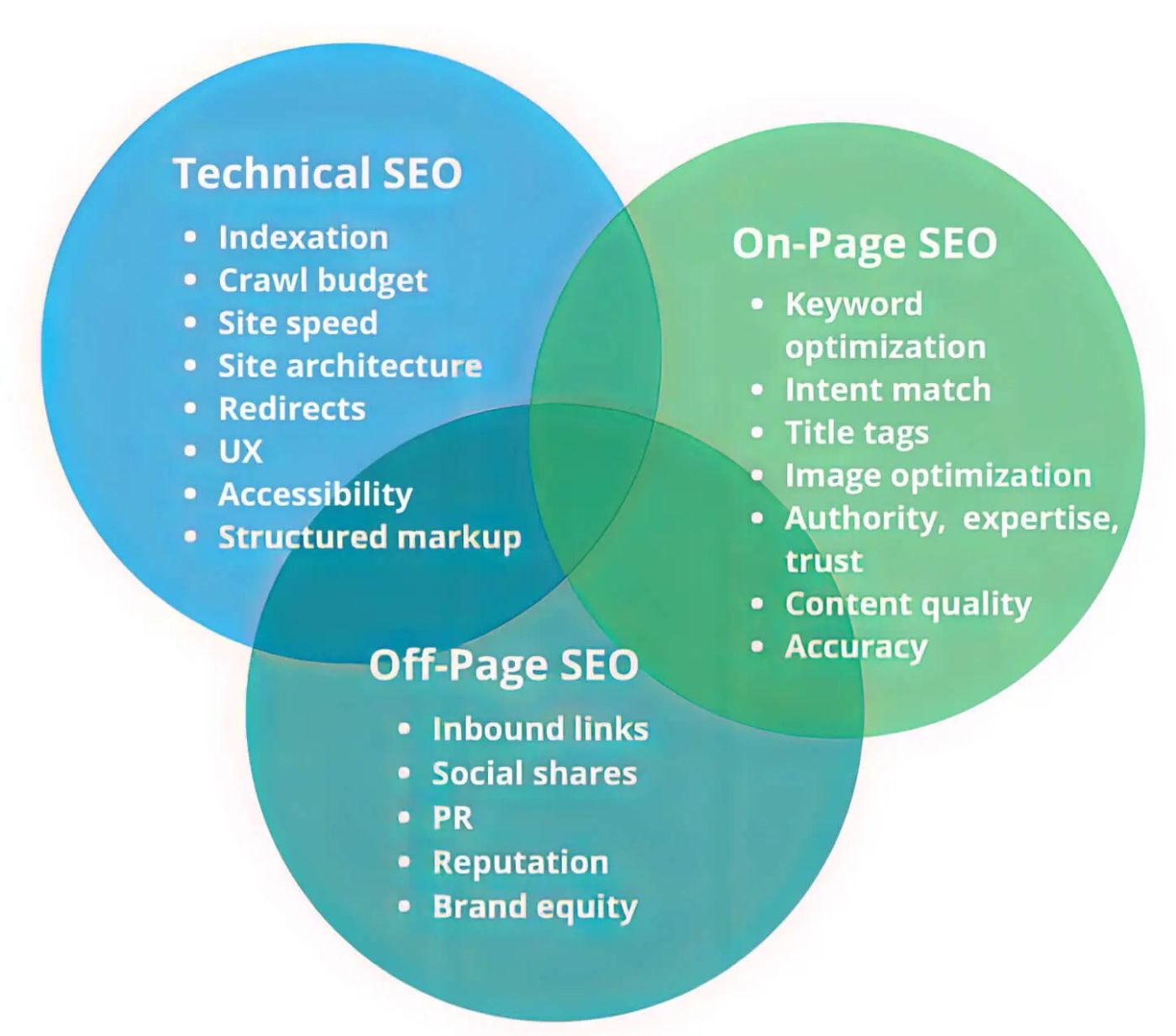

__Technical SEO__ issues are problems related to the technical aspects of your website.

The technical aspects include your website's infrastructure, code, and configuration. These problems affect how search engines crawl, render, and rank your site.

It is quite different from organic and local SEO, which deal with on-page and off-page features and how they connect to your content.

Here is a visual comparison of on-page and off-page SEO.

Examples of technical SEO issues include page speed errors, indexation issues, crawlability issues, etc.

Why is it important to fix technical SEO issues?

Technical SEO issues can hinder search engines from properly crawling, indexing, and understanding your website.

Consequently, they can lead to fewer leads, conversions, and sales.

Technical SEO issues such as slow page load times, broken links, or mobile incompatibility can affect your user experience negatively.

This can frustrate your visitors and drive them away from your site.

It's easy to overlook technical SEO issues, yet they can significantly hurt your site's rankings.

Below are common technical SEO issues, and how to find and fix them.

Common technical issues and how to fix them

1. Problem with indexation

Indexation is one of the most common technical SEO issues I have encountered since I started my journey. When search engines like Google visit your website, they analyze your content and store it in their index to provide relevant search results to users.

Indexation issues are problems that prevent search engines from properly crawling and indexing web pages.

Three requirements must be met for your web page to be considered indexable:

Crawlability

Your webpage should be accessible for search engine crawlers to explore and analyze its content.

Crawlability means you grant search engines (Googlebot, Bingbot, etc.) a gate pass to view and understand the content on your web page.

If you haven't blocked Googlebot from accessing the page through the robots.txt file, you don’t have a crawlability issue.

Noindex Tag

Your page should not have a "noindex" tag. The "noindex" tag is a directive that tells search engines not to include the page in their indexes. If your page has a "noindex" tag, search engines will not display it in the search results.

Canonicalization

The third requirement is that, in the case of duplicate content, your page should have a canonical tag indicating the main version of the content.

Canonicalization is used to address duplicate content issues when multiple versions of the same content exist.

So, when you specify a canonical URL, you indicate which version should be considered the primary page.
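The noindex and canonical requirements can be checked directly in a page's HTML. Below is a minimal Python sketch that uses only the standard library. The function name `indexability_signals` and the sample markup are hypothetical, and the sketch only looks at `<meta name="robots">` and `<link rel="canonical">` tags, not at HTTP headers.

```python
from html.parser import HTMLParser


class _SignalParser(HTMLParser):
    """Collects the meta robots directive and canonical URL from a page."""

    def __init__(self):
        super().__init__()
        self.noindex = False
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            # A robots meta tag containing "noindex" keeps the page out of the index.
            if "noindex" in (attrs.get("content") or "").lower():
                self.noindex = True
        elif tag == "link" and (attrs.get("rel") or "").lower() == "canonical":
            self.canonical = attrs.get("href")


def indexability_signals(html):
    """Return the noindex flag and canonical URL found in the HTML."""
    parser = _SignalParser()
    parser.feed(html)
    return {"noindex": parser.noindex, "canonical": parser.canonical}


# Hypothetical page markup used only for illustration.
sample = """
<html><head>
<meta name="robots" content="noindex, follow">
<link rel="canonical" href="https://example.com/page/">
</head><body></body></html>
"""
print(indexability_signals(sample))
```

A page that returns `noindex: True`, or a canonical pointing somewhere you did not intend, is worth a closer look before you move on to other checks.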

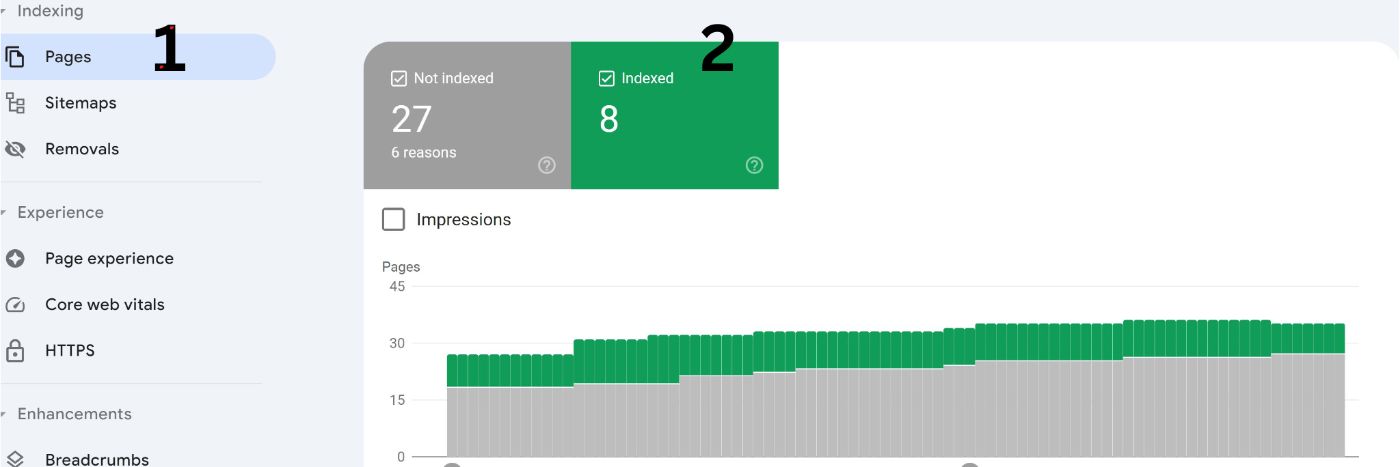

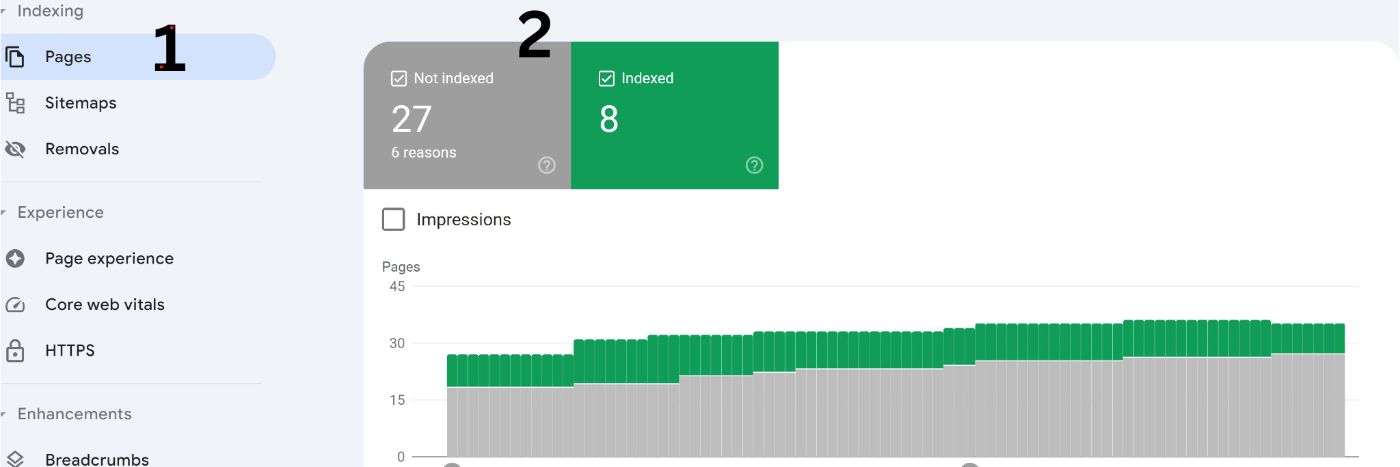

How to find and fix indexation issues

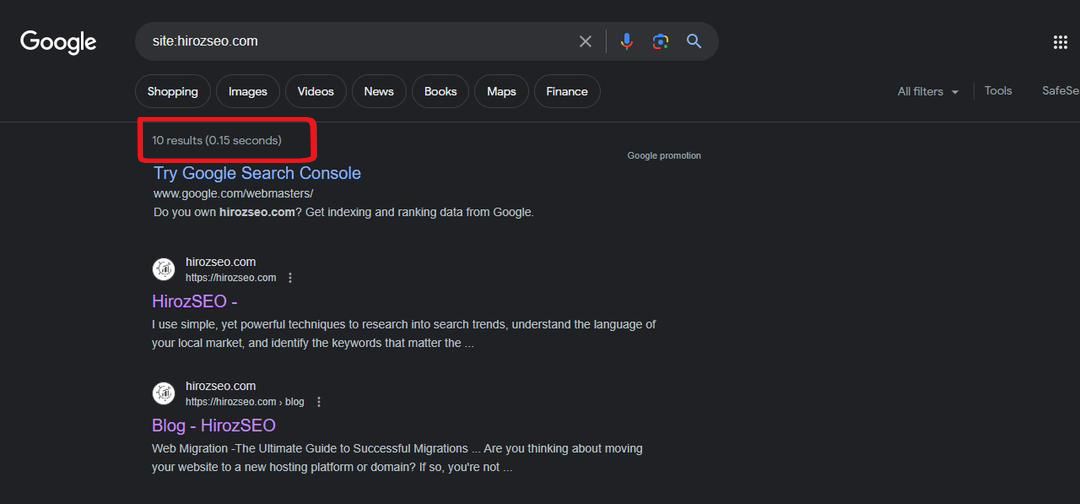

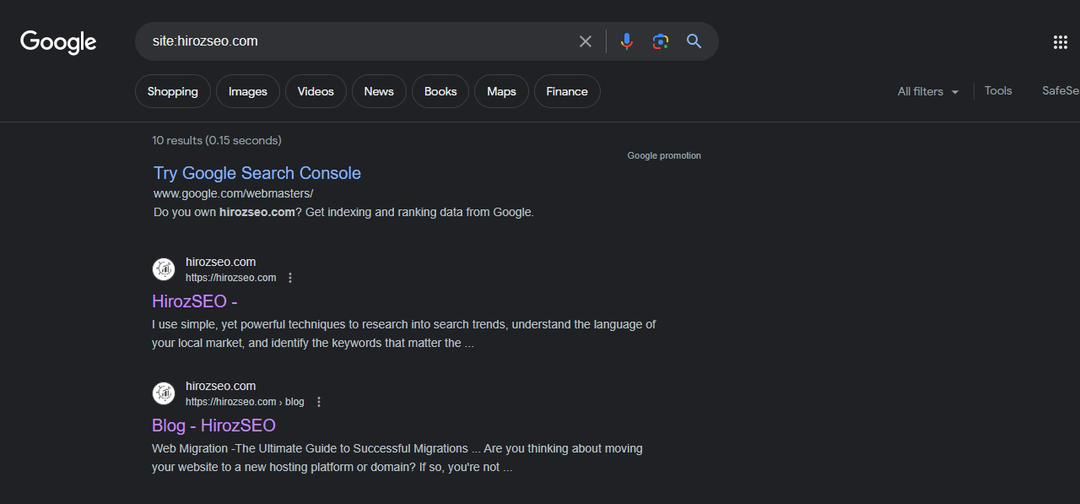

One way to find out if your webpage has an indexation issue is to use Google as your source of truth.

Conduct a site search on Google by typing “site:example.com” in the search bar.

The result count of the site search gives you a rough idea of how many of your pages are indexed.

Here is an example of what I mean.

So, from the image above, I can see that I have about 10 pages indexed.

Another way is to use SEO tools like Ahrefs, Semrush, Screaming Frog, etc.

What to think about?

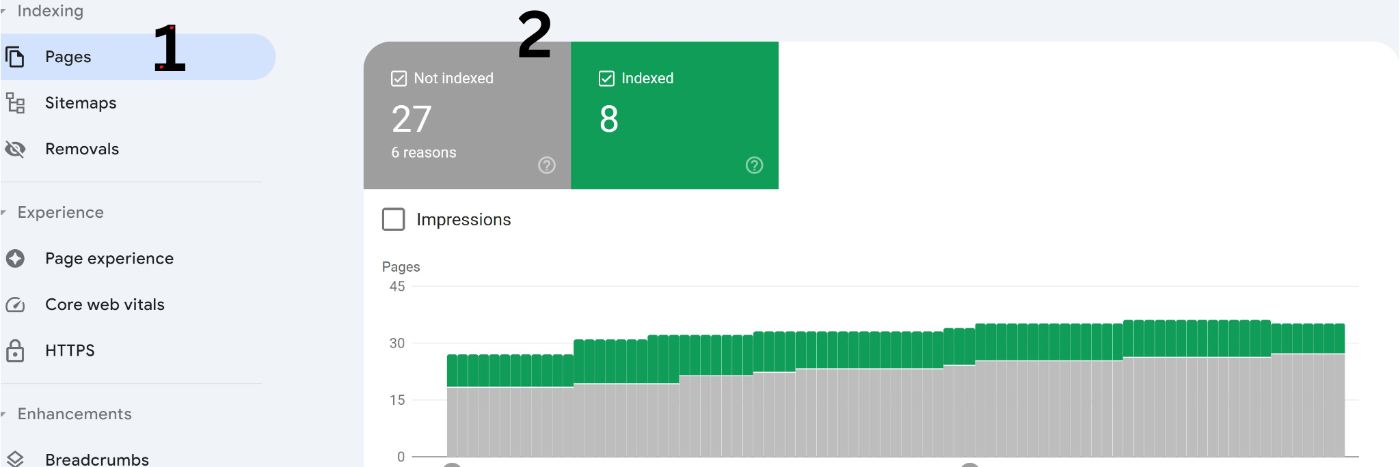

Do I see the expected number of indexed pages?

The number of pages you expect to be indexed depends on the size and structure of your website.

If you have a smaller website or have excluded certain pages from indexing, then it is fine to have fewer indexed pages.

But suppose you didn't, and you are seeing fewer pages than expected. In that case, here is what you need to do:

- Check for technical issues that may prevent search engines from properly indexing these pages, such as incorrect robots.txt configurations or "noindex" tags accidentally applied.

You can do this in Search Console by navigating to “indexed pages” and then to the “reason” why the pages aren’t indexed.

- Additionally, consider building internal and external links to these pages to improve their visibility and likelihood of being indexed.

On the other hand, if you own a large website with a substantial amount of valuable content, you should have a higher number of indexed pages.

However, if you see more indexed pages than expected, here is what you need to do:

- Look out for the affected URLs using Search Console or any other SEO tool.

- Consider checking your log files for spammy links.

- Check to see if the older version of your site is indexed instead of the updated site.
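Comparing the pages you expect to be indexed with the pages that actually are can be sketched as a simple set comparison. This is a minimal illustration, assuming you already have both lists (for example, one from your sitemap and one exported from an SEO tool). The function name and the example.com URLs are hypothetical.

```python
def compare_index_coverage(expected_urls, indexed_urls):
    """Compare the URLs you want indexed with the URLs that are indexed."""
    expected = set(expected_urls)
    indexed = set(indexed_urls)
    return {
        "missing": sorted(expected - indexed),     # expected but not indexed
        "unexpected": sorted(indexed - expected),  # indexed but not expected
    }


# Hypothetical data for illustration only.
expected = [
    "https://example.com/",
    "https://example.com/blog/",
    "https://example.com/pricing/",
]
indexed = [
    "https://example.com/",
    "https://example.com/blog/",
    "https://example.com/old-home/",
]
report = compare_index_coverage(expected, indexed)
print(report["missing"])     # ['https://example.com/pricing/']
print(report["unexpected"])  # ['https://example.com/old-home/']
```

The "missing" list corresponds to the fewer-than-expected case, and the "unexpected" list to the more-than-expected case.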

Summary of indexation issues, how to fix them, and key takeaways.

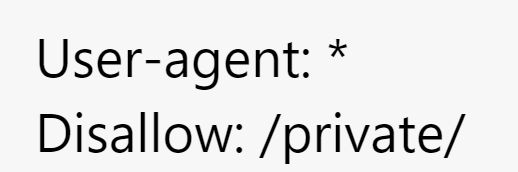

2. Robots.txt Error

Similar to indexation problems, another technical SEO issue to watch out for is the robots.txt directive error.

Robots.txt files contain directives that tell search engine crawlers which pages they may or may not crawl.

Crawling is one of the first things search engines do when they come to your website.

It helps search engines understand what your website is all about.

When search engines can't crawl your website, it won't be indexed and subsequently won't rank.

Here is an example of what a disallow statement looks like.

In this example, the first line, "User-agent: *", indicates that the directives that follow apply to all search engine crawlers (Google, Bing, etc.).

The second line, "Disallow: /private/", specifies that the "private" directory should not be crawled by search engines. Keep in mind that Disallow only blocks crawling: a disallowed URL can still be indexed if other pages link to it, and because robots.txt is publicly readable, it should not be relied on to protect sensitive information.
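The two directives described above can be tested with Python's standard `urllib.robotparser` module. This is a minimal sketch. The helper name `is_crawlable` and the example.com URLs are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# The two directives described above, as they would appear in robots.txt.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def is_crawlable(robots_txt, url, user_agent="Googlebot"):
    """Return True if the given robots.txt rules allow user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


print(is_crawlable(ROBOTS_TXT, "https://example.com/private/report.html"))  # False
print(is_crawlable(ROBOTS_TXT, "https://example.com/blog/"))                # True
```

Running a check like this against your most important URLs is a quick way to catch an unintentional disallow.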

I have seen a lot of disallow statements applied to pages unintentionally. The good news is that you too can learn how to find them.

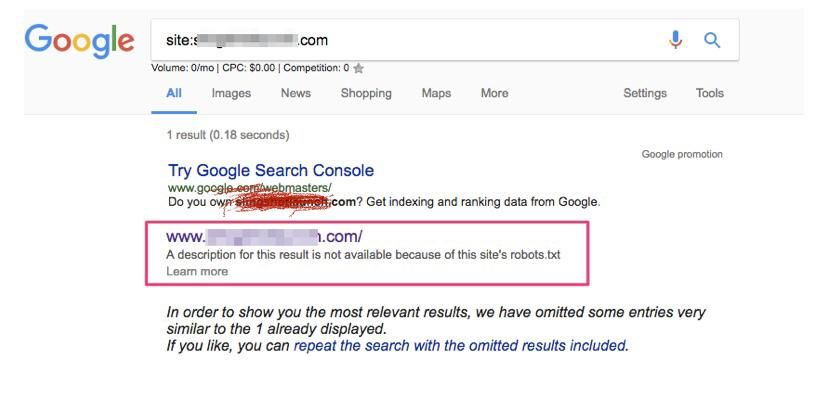

How to find and fix robots.txt issues

To check, enter site:example.com/robots.txt into the Google search bar.

Ideally, your site should not show a “user agent disallow” unless you specifically requested one from your developer.

So, when you conduct a site search and your site has a “user agent disallow”, it would look like this on the search engine results page (SERP).

To fix this, check with your developer to see if this directive was added by mistake.

3. Slow page speed

While robots.txt issues are technical SEO issues you should worry about, page speed issues are also worth your attention.

Page speed measures how quickly the content on your webpage loads.

Google has acknowledged it as a ranking factor, meaning it can impact your website's visibility in search engine results.

According to Google:

“Like us, our users place a lot of value in speed—that's why we've decided to take site speed into account in our search rankings. We use a variety of sources to determine the speed of a site relative to other sites”

From a business point of view, a slow site can lead to an increased bounce rate.

A study by

Hence, you should aim for a fast site.

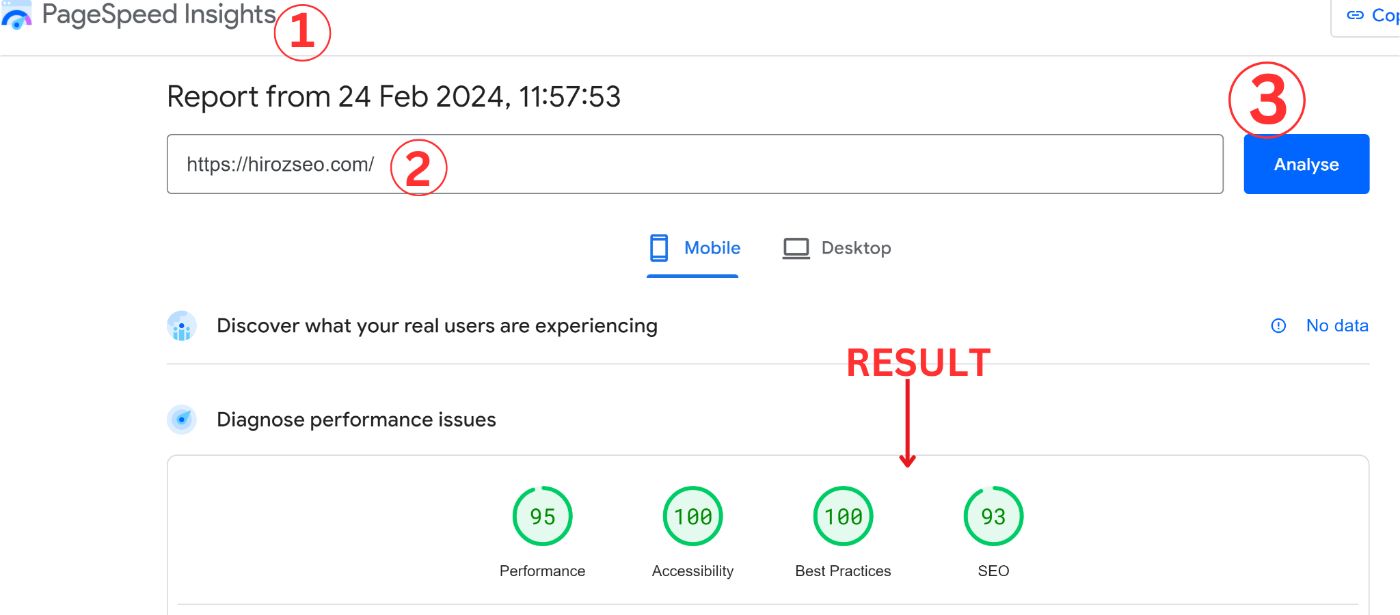

To check your site speed, you can use tools like

How to find and fix Page Speed issues

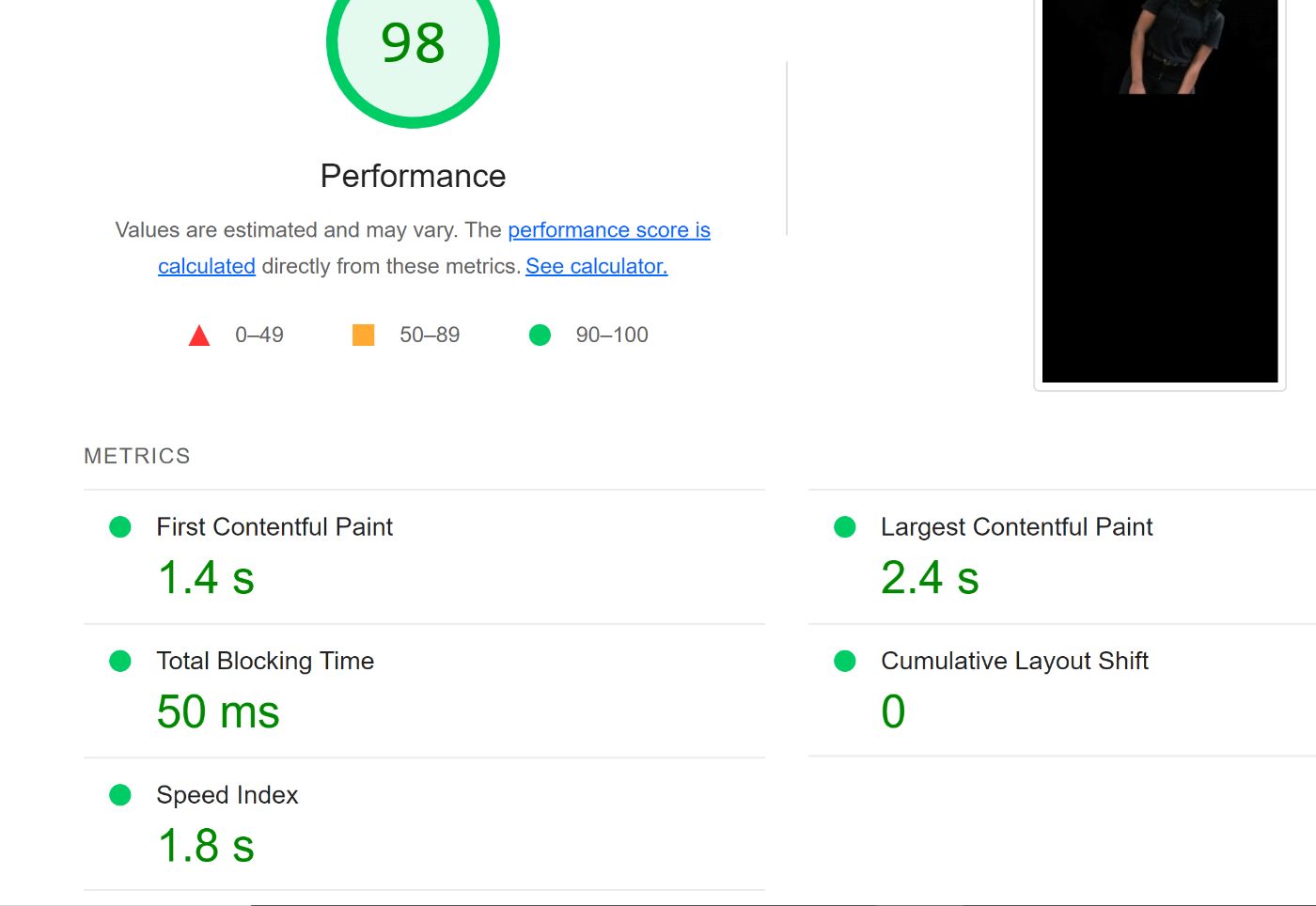

To find out if your site has page speed issues, go to

The performance section gives an overview of your page load time.

It can be evaluated using different metrics: First Contentful Paint (FCP), Total Blocking Time (TBT), Largest Contentful Paint (LCP), and Cumulative Layout Shift (CLS).

FCP tells you how long it takes for your browser to display the first bit of visible content on your page after your user clicks a link or enters your website.

This content can be anything from text to images, and it helps determine how quickly your page appears to be loading for users.

For a positive user experience, Google recommends aiming for 1.8 seconds or less.

LCP measures the time it takes for the largest visible element on your page, such as a hero image or a block of text, to load.

For a positive user experience, Google recommends an LCP (Largest Contentful Paint) of under 2.5 seconds.

CLS measures unexpected layout shifts that occur as your page loads and becomes interactive. Layout shifts are moving page elements, such as text, images, buttons, or other content, in a way that affects your user’s experience.

These shifts can occur when resources load asynchronously, or when content with dynamic dimensions is added or resized without reserving space.

Google recommends a CLS score of 0.1 or less.

TBT is a performance metric that measures the delay experienced by users when interacting with your web page.

The higher the total blocking time, the more delayed and unresponsive the page may feel to users.

One technique you can employ to reduce it is deferring non-critical JavaScript execution. You’ll need to talk to your web developers about this one.

Speed Index quantifies how quickly the content of a web page visually appears to users during the page load process. It provides a numerical value that represents the perceived loading speed of a webpage.

Unlike other performance metrics that focus on specific events or elements, the Speed Index measures the time it takes for different elements to load and render, and how they are displayed to the user.

A lower Speed Index value indicates that the page appears to load more quickly and provides a better user experience.
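The thresholds quoted above can be turned into a quick pass/fail check. This is a minimal sketch that only covers the three metrics for which this article states a recommended value (FCP, LCP, and CLS). The function name and the sample measurements are hypothetical.

```python
# "Good" thresholds quoted in this article: FCP and LCP in seconds, CLS unitless.
GOOD_THRESHOLDS = {"FCP": 1.8, "LCP": 2.5, "CLS": 0.1}


def failing_metrics(measurements):
    """Return the metrics whose measured value exceeds the recommended threshold."""
    return [
        name
        for name, limit in GOOD_THRESHOLDS.items()
        if name in measurements and measurements[name] > limit
    ]


page = {"FCP": 1.2, "LCP": 3.4, "CLS": 0.25}  # hypothetical measurements
print(failing_metrics(page))  # ['LCP', 'CLS']
```

In this example the page paints its first content quickly, but its largest element loads slowly and its layout shifts too much.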

How to fix site speed issues

- Compress your images: Reduce the file size of your images without significant loss of quality. This can be done using image compression tools or plugins.

- Move to a different hosting service: If your web server is causing performance issues, consider switching to a hosting provider that offers better server performance, improved infrastructure, and faster response times.

- Minimize files or code through minification: Minify your CSS, JavaScript, and HTML files by removing unnecessary characters, line breaks, and whitespace.

- Enable browser cache: Configure your server to enable browser caching, which allows returning visitors to load your website faster by storing static resources (such as images, CSS, and JavaScript) locally on their device. This reduces the need to re-download files on subsequent visits.
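To confirm that browser caching is actually enabled, you can inspect the `Cache-Control` response header of a static resource. The sketch below assumes you already have the response headers as a dictionary (for example, copied from your browser's developer tools). The function name `cache_max_age` is hypothetical.

```python
import re


def cache_max_age(headers):
    """Return the max-age (in seconds) from a Cache-Control header, or None."""
    # Header names are case-insensitive, so normalize the keys first.
    normalized = {key.lower(): value for key, value in headers.items()}
    cache_control = normalized.get("cache-control", "").lower()
    if "no-store" in cache_control:
        return None  # the browser is told not to store the resource at all
    match = re.search(r"max-age=(\d+)", cache_control)
    return int(match.group(1)) if match else None


# Hypothetical headers for a stylesheet.
print(cache_max_age({"Cache-Control": "public, max-age=31536000"}))  # 31536000
print(cache_max_age({"Content-Type": "text/css"}))                   # None
```

A result of `None` for images, CSS, or JavaScript files suggests caching has not been configured for them.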

Site speed can be difficult to fix on your own, especially when you own a big site. That is why I advise you to work hand-in-hand with your developer.

4. Site security issues

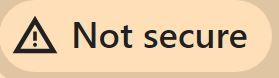

Aside from site speed, another technical SEO issue you might encounter when dealing with client sites is site security.

A secure website with proper encryption measures, such as HTTPS, assures users that their data is transmitted safely and reduces the risk of unauthorized access or data breaches.

How to find and fix site security issues

To find out if your website lacks security, type the domain name into your browser's address bar.

An unsecured URL will show a “Not secure” warning in browsers such as Google Chrome.

If you need to transition your website to HTTPS, you'll need to obtain an SSL certificate from a certificate authority such as Let's Encrypt, or through your hosting provider.

Once you obtain and install the certificate, your website will be served over HTTPS.

5. Multiple domain version

Another technical SEO issue to pay attention to is multiple domain versions.

Multiple domain versions occur when the same page on your website is reachable at more than one version of its URL. For example,

While your users won’t care which version of your website gets shown to them, search engines do.

When multiple versions exist, inbound links can be spread across the different variations, diluting the overall link equity.

How to find and fix multiple domain versions

Use the "site:" operator in Google search, along with your domain name (e.g., "site:example.com"). This will display the indexed versions of your pages.

Next, review the results to identify any inconsistencies or multiple versions of your URLs that are being indexed.

Work with your developer to set up 301 redirects from your non-preferred to your preferred version.

The 301 redirect will automatically direct users and search engines to the preferred version and consolidate link equity.
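Before handing the redirect work to your developer, it can help to list which URL each variant should end up at. The sketch below is an illustration only: it assumes the variants differ by protocol (http vs. https) and by the www prefix, and that `https://www.example.com` is the preferred version. The function name is hypothetical, and the actual 301 redirects still have to be configured on your server.

```python
from urllib.parse import urlsplit, urlunsplit


def preferred_url(url, preferred_host="www.example.com"):
    """Map http/https and www/non-www variants of a URL to one preferred version."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    bare_host = preferred_host[4:] if preferred_host.startswith("www.") else preferred_host
    if host not in (bare_host, "www." + bare_host):
        return url  # belongs to a different site, so leave it untouched
    return urlunsplit(("https", preferred_host, parts.path or "/", parts.query, parts.fragment))


for variant in (
    "http://example.com/shoes",
    "http://www.example.com/shoes",
    "https://example.com/shoes",
):
    print(variant, "->", preferred_url(variant))
```

Every variant in the loop maps to `https://www.example.com/shoes`, which is the single destination each 301 redirect should point to.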

6. Duplicate pages

While multiple domain versions share similarities with duplicate pages, they have distinct differences.

Duplicate pages are pages with the same URL structure but slight variations.

They are common on e-commerce websites, particularly with category URLs and filters.

Here is an example of URLs that can be flagged as duplicates by Google:

https://example.com/bag/menbag/black

https://example.com/bag/menbag/brown

Duplicate content is resolved with a rel="canonical" tag.

How to find and fix

In Google Search Console, you can check for duplicate pages by navigating to “pages”, and then “not indexed”, as shown in the image below.

You can also use a web crawler to generate a comprehensive list of all duplicate URLs on your site.

To fix duplicate issues, add a canonical tag in the head section of the HTML code. The canonical tag should point to the preferred version of the content.

For example:

<link rel="canonical" href="

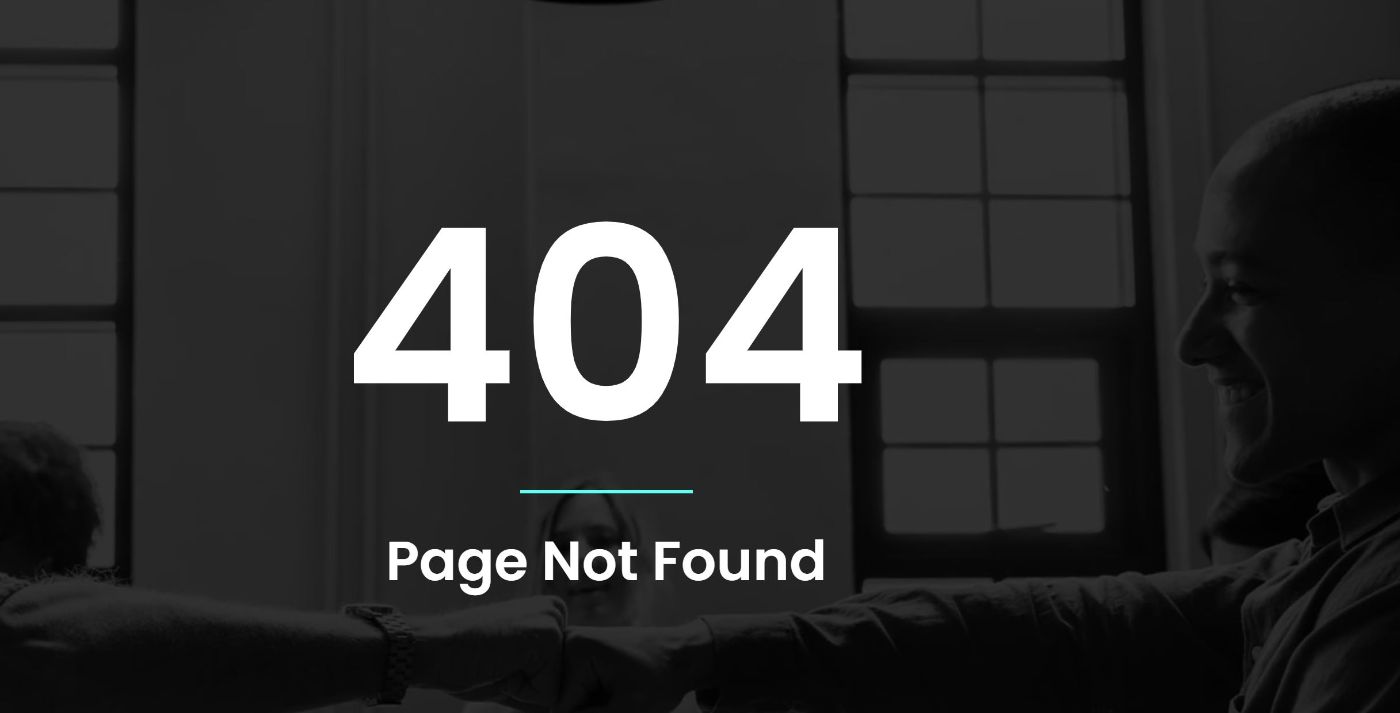

7. Broken links

This is what a 404 page looks like.

While not all 404s are issues, 404 error responses with significant traffic or internal links pointing to them can affect user experience and your website's crawl budget.

How to find and fix

To address this issue, you need to identify pages that may be generating 404 errors by using tools like Google Search Console and web crawlers like Screaming Frog and Lumar.

In Google Search Console, navigate to “pages”, and then click on “not indexed” like the image below.

You will see something like this. Next, click on 404 not found to see the affected URLs.

You can then update the links that point to each broken URL, or redirect the broken URL to a relevant live page.
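Once you have the list of 404 URLs, the next question is which internal pages still link to them. Here is a minimal sketch of that lookup, assuming you have exported an internal link map and status codes from a crawler. The function name and all of the data are hypothetical.

```python
def broken_link_report(links, status_codes):
    """Map each 404 URL to the internal pages that link to it."""
    report = {}
    for source, targets in links.items():
        for target in targets:
            if status_codes.get(target) == 404:
                report.setdefault(target, []).append(source)
    return report


# Hypothetical crawl export: page -> internal links found on that page.
links = {
    "/": ["/blog/", "/old-offer/"],
    "/blog/": ["/old-offer/", "/pricing/"],
}
status_codes = {"/blog/": 200, "/pricing/": 200, "/old-offer/": 404}

print(broken_link_report(links, status_codes))
# {'/old-offer/': ['/', '/blog/']}
```

Pages with the most inbound internal links are usually the ones worth fixing first.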

8. Redirect chain issues

While broken links are important to fix, another technical SEO issue that can affect your rankings is redirects.

One often overlooked cause of increased load time is excessive redirect chains. Each redirect adds extra time to the page load.

In a study conducted by

From a business perspective, this can lead to an increased bounce rate.

Also, search engines might end up not indexing the destination URL.

Your redirect structure should look like this

Redirect chains may be difficult to spot in Search Console, but web crawlers can detect them easily.
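To illustrate what a crawler does here, the sketch below follows a map of redirects and counts the hops. It assumes the redirect map has already been collected (for example, from a crawler export). The function name, the `max_hops` limit, and the URLs are hypothetical.

```python
def redirect_chain(start_url, redirects, max_hops=10):
    """Follow redirects from start_url and return every URL visited, in order."""
    chain = [start_url]
    while chain[-1] in redirects and len(chain) <= max_hops:
        next_url = redirects[chain[-1]]
        seen_before = next_url in chain
        chain.append(next_url)
        if seen_before:  # redirect loop: stop instead of cycling forever
            break
    return chain


# Hypothetical redirect map: source URL -> redirect target.
redirects = {
    "http://example.com/a": "https://example.com/a",
    "https://example.com/a": "https://example.com/b",
    "https://example.com/b": "https://example.com/c",
}
chain = redirect_chain("http://example.com/a", redirects)
print(" -> ".join(chain))
print(len(chain) - 1, "hops")  # 3 hops
```

A chain with more than one hop can usually be shortened by pointing the first URL straight at the final destination.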

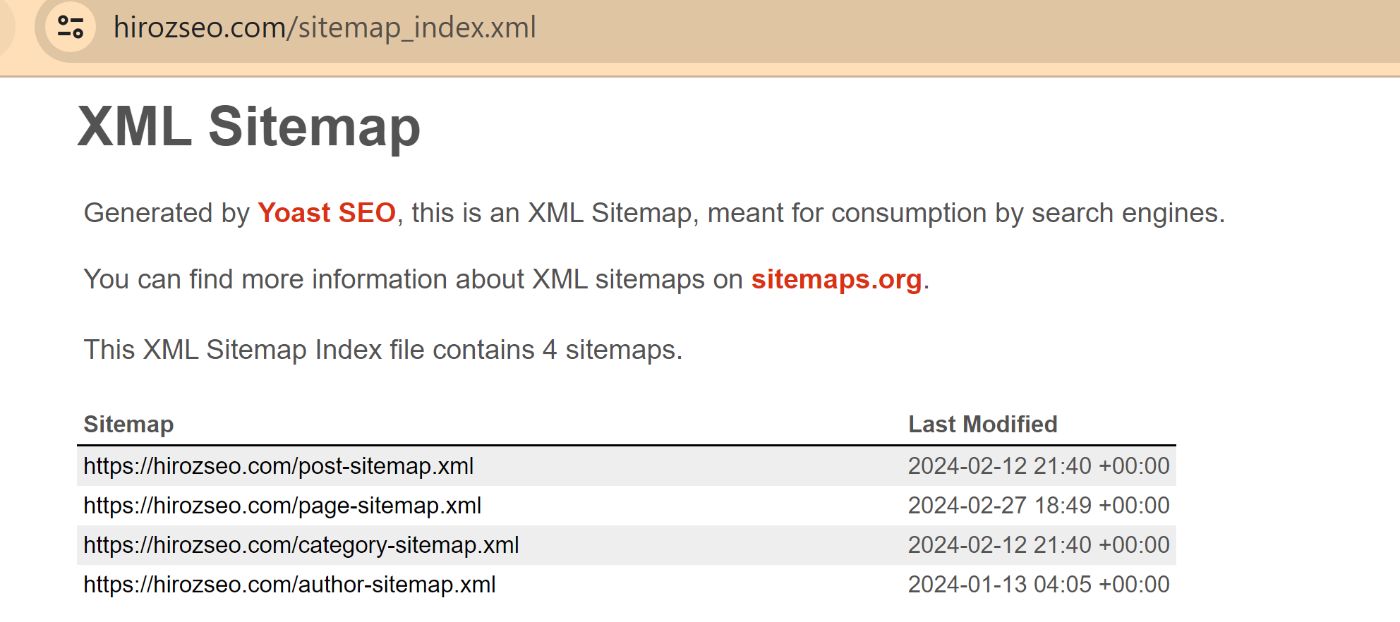

9. XML Sitemaps

XML sitemaps play a crucial role in helping Google's search bots gain a deeper understanding of the pages on your website.

They provide a structured overview of your site's content. This, in turn, enhances the visibility and accessibility of your website in search results.

Here are some common issues I've encountered with XML sitemaps while working on clients' sites:

- Not creating an XML sitemap in the first place.

- Presence of outdated versions of the sitemap.

- Presence of broken links in the sitemap.

- Sitemap containing incomplete URLs of the website.

- Duplicate versions of the sitemap exist.

How to find and fix XML Sitemap issues

To find out whether your site has a sitemap, go to Google search and enter your website URL followed by /sitemap.xml in the search box.

For example

You should see an image like this.

To address your sitemap problems, start by creating a sitemap if you don’t have one.

If you use a CMS platform such as WordPress, you can install the Yoast SEO plugin, which automatically generates a sitemap for you.

Also, be sure to read

As a rule of thumb:

- Your sitemap should be without broken links.

- It should be without excessive redirect chains.

- It should contain updated URLs.
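The rules of thumb above can be checked with a short script. This is a minimal sketch that parses a sitemap with Python's standard library and flags any listed URL that does not return a 200 status. It assumes you already have the status codes (for example, from a crawler export). The function names and sample data are hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Return every <loc> value from a sitemap, in document order."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text]


def sitemap_problems(xml_text, status_codes):
    """Return sitemap URLs that are broken (4xx/5xx) or redirect (3xx)."""
    problems = {}
    for url in sitemap_urls(xml_text):
        status = status_codes.get(url)
        if status is not None and status != 200:
            problems[url] = status
    return problems


# Hypothetical sitemap and crawl results.
sample = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/blog/</loc></url>
  <url><loc>https://example.com/old-page/</loc></url>
</urlset>"""
statuses = {
    "https://example.com/": 200,
    "https://example.com/blog/": 301,
    "https://example.com/old-page/": 404,
}
print(sitemap_problems(sample, statuses))
```

Any URL the script reports should either be fixed or removed from the sitemap.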

I recommend checking out this detailed guide by Sitebulb to learn

How to prioritize technical SEO issues

It's common to feel tempted to fix every issue discovered on your website at once.

I can relate to that temptation myself.

However, I've learned the importance of prioritizing fixes instead of trying to tackle everything simultaneously.

When you prioritize fixes, you address the most critical issues first and gradually work through the rest.

So when trying to fix technical SEO issues, I like to categorize them in two ways:

- Classification of issues based on the pressing goals

- Classification based on the type of issue.

1. Based on pressing goals

When it comes to pressing goals, I'm referring to short-term issues that require quick attention.

Let's say I work with an e-commerce brand like Brand X, which sells probiotic supplements.

And we identified two short-term goals:

- Sell x amount of probiotics for the next seasonal demand.

- Open a new store in a different geographic location.

Say we are approaching a season of high demand for the product. In that case, I will pick the first option.

My goal would be to enhance the ranking potential of relevant pages, whether it's maintaining the current page rankings or improving the pages with potential.

To achieve this, I will prioritize the pages that receive the highest clicks and engagement.

Additionally, I focus on low-hanging fruit, such as pages that are on the second page of search engine results pages (SERPs), as they have the potential for quick improvements.

2. Based on the type of issue

Next, I identify the specific issues affecting my priority pages. For instance, I may come across crawlability and indexation issues, as well as keyword-related problems on the target URLs.

To ensure a systematic approach, I will prioritize crawlability and indexation issues before tackling content-related issues.

This ensures that search engines can easily crawl and index the pages, which lays a strong foundation for improved rankings.
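The ordering described above can be sketched as a simple sort: crawlability and indexation issues first, then content issues, with higher-click pages first within each group. This is only an illustration. The issue labels, URLs, and click counts are hypothetical.

```python
# Lower number = fix first. Crawlability and indexation come before content,
# mirroring the order described above; the labels themselves are hypothetical.
ISSUE_PRIORITY = {"crawlability": 0, "indexation": 0, "content": 1}


def prioritize(issues):
    """Sort issues by type first, then by page clicks (highest first)."""
    return sorted(
        issues,
        key=lambda issue: (ISSUE_PRIORITY.get(issue["type"], 2), -issue["clicks"]),
    )


issues = [
    {"url": "/probiotics/kids/", "type": "content", "clicks": 900},
    {"url": "/probiotics/daily/", "type": "indexation", "clicks": 400},
    {"url": "/probiotics/travel/", "type": "crawlability", "clicks": 650},
]
for issue in prioritize(issues):
    print(issue["url"], issue["type"])
```

Even though the content issue sits on the page with the most clicks, it is handled last because the other two pages cannot rank at all until they are crawlable and indexed.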