A few days ago, I underwent an MRI scan. They slid me into a large tube, and for 15 minutes, the machine around me buzzed, hummed, and clicked. At the end of the examination, I received a CD containing the data. What does a good developer do in such a situation? Of course, as soon as they get home, they start examining the data and thinking about how they might extract it using Python.

The CD contained a bunch of DLLs, an EXE, and a few additional files for a Windows viewer, which are obviously useless on Linux. The interesting part was in a folder named DICOM, which held a series of extension-less files spread across eight folders. ChatGPT helped me figure out that DICOM is a standard format typically used for storing MRI, CT, and X-ray images. For scans like mine, it is essentially a simple packaging format containing grayscale images along with some metadata.
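Since the files have no extension, it helps to have a quick way to tell which of them are actually DICOM. Standard DICOM files start with a 128-byte preamble followed by the four bytes `DICM`, so a check can be sketched with the standard library alone. The helper name `looks_like_dicom` and the file names below are my own illustration, and some older files omit the preamble, so treat a negative result as a hint rather than proof.

```python
import os
import tempfile


def looks_like_dicom(path):
    """Return True if the file has the 'DICM' marker after a 128-byte preamble."""
    with open(path, "rb") as f:
        header = f.read(132)
    return len(header) == 132 and header[128:132] == b"DICM"


# Demonstration on two throwaway files (not real scans).
with tempfile.TemporaryDirectory() as tmp:
    fake_dicom = os.path.join(tmp, "IM000001")  # hypothetical file name
    with open(fake_dicom, "wb") as f:
        f.write(b"\x00" * 128 + b"DICM")

    other = os.path.join(tmp, "README")
    with open(other, "wb") as f:
        f.write(b"just text")

    results = (looks_like_dicom(fake_dicom), looks_like_dicom(other))

print(results)  # (True, False)
```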

The following code allows you to easily export the files in PNG format:

    import os
    import sys

    import numpy as np
    import pydicom
    from PIL import Image


    def save_image(pixel_array, output_path):
        """Rescale pixel data to 8-bit grayscale and save it as a PNG."""
        if pixel_array.dtype != np.uint8:
            pixels = pixel_array.astype(np.float64)
            value_range = pixels.max() - pixels.min()
            if value_range == 0:
                # A constant image would otherwise cause a division by zero.
                pixel_array = np.zeros(pixels.shape, dtype=np.uint8)
            else:
                pixel_array = ((pixels - pixels.min()) / value_range * 255).astype(np.uint8)
        img = Image.fromarray(pixel_array)
        img.save(output_path)
        print(f"Saved image to {output_path}")


    def process_dicom_directory(dicomdir_path, save_directory):
        """Walk the DICOMDIR index and export every referenced image as a PNG."""
        os.makedirs(save_directory, exist_ok=True)

        # patient_records and children belong to pydicom's older DicomDir
        # interface; recent pydicom releases favor pydicom.fileset.FileSet,
        # so this traversal may need adapting there.
        dicomdir = pydicom.dcmread(dicomdir_path)

        for patient_record in dicomdir.patient_records:
            if 'PatientName' in patient_record:
                print(f"Patient: {patient_record.PatientName}")

            for study_record in patient_record.children:
                if 'StudyDate' in study_record:
                    print(f"Study: {study_record.StudyDate}")

                for series_record in study_record.children:
                    for image_record in series_record.children:
                        if 'ReferencedFileID' not in image_record:
                            continue

                        # ReferencedFileID lists the path components relative to
                        # the DICOMDIR file; a single component is a plain string.
                        file_id = image_record.ReferencedFileID
                        if isinstance(file_id, str):
                            file_id = [file_id]
                        file_id = [str(part) for part in file_id]
                        file_id_path = os.path.join(*file_id)
                        print(f"Image: {file_id_path}")

                        image_path = os.path.join(os.path.dirname(dicomdir_path), file_id_path)
                        image_save_path = os.path.join(save_directory, '_'.join(file_id) + '.png')

                        try:
                            img_data = pydicom.dcmread(image_path)
                            print("DICOM image loaded successfully.")
                            save_image(img_data.pixel_array, image_save_path)
                        except FileNotFoundError as e:
                            print(f"Failed to read DICOM file at {image_path}: {e}")
                        except Exception as e:
                            print(f"An error occurred: {e}")


    if __name__ == "__main__":
        if len(sys.argv) < 3:
            print("Usage: python dicomsave.py <path_to_DICOMDIR> <path_to_save_images>")
            sys.exit(1)

        process_dicom_directory(sys.argv[1], sys.argv[2])

The code expects two parameters. The first is the path to the DICOMDIR file, and the second is a directory where the images will be saved in PNG format.

To read the DICOM files, we use the pydicom library. The DICOMDIR index is loaded using the pydicom.dcmread function, and from it we can extract metadata (such as the patient's name) and the studies that reference the images. Each image file is also read with dcmread. The raw pixel data are accessible through the pixel_array attribute, which the save_image function rescales to 8 bits and saves as a PNG. It's not too complicated.
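The only real arithmetic in the script is the rescaling in save_image, which stretches whatever value range the scanner produced onto 0..255. Here is the same idea as a pure-Python sketch on a tiny nested list instead of a NumPy array. The function name `to_uint8` and the sample values are made up for illustration, and the guard for constant images is my own addition.

```python
def to_uint8(rows):
    """Linearly rescale a 2D list of numbers to integers in 0..255."""
    flat = [v for row in rows for v in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:
        # A flat image has no contrast; map everything to 0
        # instead of dividing by zero.
        return [[0 for _ in row] for row in rows]
    # int() truncates, mirroring what astype('uint8') does for these values.
    return [[int((v - lo) / (hi - lo) * 255) for v in row] for row in rows]


# Hypothetical 2x2 "scan" with 12-bit intensities.
sample = [[0, 1024], [2048, 4095]]
scaled = to_uint8(sample)
print(scaled)  # [[0, 63], [127, 255]]
```

The darkest value always ends up at 0 and the brightest at 255, which is why the exported PNGs look reasonable regardless of the scanner's original bit depth.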

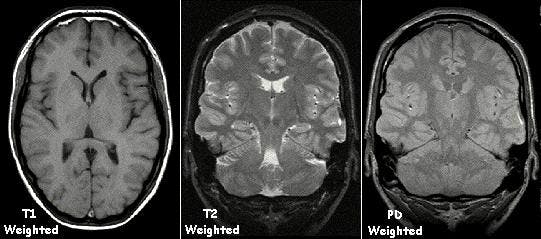

The result is a set of square grayscale images (typically 512x512) that are easy to work with later on.

Why did I think it was important to write this short story about my adventure using the DICOM format? When a programmer hears about processing medical data, they might think it's something serious, something that only universities and research institutes can handle (at least, that's what I thought). As you can see, we're talking about simple grayscale images, which are very easy to process and ideal for things like neural network processing.

Consider the possibilities this opens up. For something like a simple tumor detector, all you need is a relatively straightforward convolutional network and a sufficient number of samples. In reality, the task isn't much different from recognizing dogs or cats in images.

To illustrate how true this is, let me provide an example. After a brief search on Kaggle, I found a notebook where brain tumors are classified based on MRI data (grayscale images). The dataset categorizes the images into four groups: three types of brain tumors and a fourth category with images of healthy brains. The architecture of the network is as follows:

    ┏━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━━┓
    ┃ Layer (type)                    ┃ Output Shape           ┃       Param # ┃
    ┡━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━━┩
    │ conv2d (Conv2D)                 │ (None, 164, 164, 64)   │         1,664 │
    ├─────────────────────────────────┼────────────────────────┼───────────────┤
    │ max_pooling2d (MaxPooling2D)    │ (None, 54, 54, 64)     │             0 │
    ├─────────────────────────────────┼────────────────────────┼───────────────┤
    │ conv2d_1 (Conv2D)               │ (None, 50, 50, 64)     │       102,464 │
    ├─────────────────────────────────┼────────────────────────┼───────────────┤
    │ max_pooling2d_1 (MaxPooling2D)  │ (None, 16, 16, 64)     │             0 │
    ├─────────────────────────────────┼────────────────────────┼───────────────┤
    │ conv2d_2 (Conv2D)               │ (None, 13, 13, 128)    │       131,200 │
    ├─────────────────────────────────┼────────────────────────┼───────────────┤
    │ max_pooling2d_2 (MaxPooling2D)  │ (None, 6, 6, 128)      │             0 │
    ├─────────────────────────────────┼────────────────────────┼───────────────┤
    │ conv2d_3 (Conv2D)               │ (None, 3, 3, 128)      │       262,272 │
    ├─────────────────────────────────┼────────────────────────┼───────────────┤
    │ max_pooling2d_3 (MaxPooling2D)  │ (None, 1, 1, 128)      │             0 │
    ├─────────────────────────────────┼────────────────────────┼───────────────┤
    │ flatten (Flatten)               │ (None, 128)            │             0 │
    ├─────────────────────────────────┼────────────────────────┼───────────────┤
    │ dense (Dense)                   │ (None, 512)            │        66,048 │
    ├─────────────────────────────────┼────────────────────────┼───────────────┤
    │ dense_1 (Dense)                 │ (None, 4)              │         2,052 │
    └─────────────────────────────────┴────────────────────────┴───────────────┘

As you can see, the network starts with a sandwich of alternating convolution and max pooling layers, which extracts the patterns characteristic of tumors, followed by two dense layers that perform the classification. This is essentially the same architecture as the one in TensorFlow's CNN sample code for the CIFAR-10 dataset. I remember it well, as it was one of the first neural networks I encountered. CIFAR-10 contains 60,000 images in ten classes, two of which are the dogs and cats I mentioned earlier. The architecture of that network looks like this:

    Model: "sequential"
    _________________________________________________________________
    Layer (type)                 Output Shape              Param #
    =================================================================
    conv2d (Conv2D)              (None, 30, 30, 32)        896
    _________________________________________________________________
    max_pooling2d (MaxPooling2D) (None, 15, 15, 32)        0
    _________________________________________________________________
    conv2d_1 (Conv2D)            (None, 13, 13, 64)        18496
    _________________________________________________________________
    max_pooling2d_1 (MaxPooling2 (None, 6, 6, 64)          0
    _________________________________________________________________
    conv2d_2 (Conv2D)            (None, 4, 4, 64)          36928
    _________________________________________________________________
    flatten (Flatten)            (None, 1024)              0
    _________________________________________________________________
    dense (Dense)                (None, 64)                65600
    _________________________________________________________________
    dense_1 (Dense)              (None, 10)                650
    =================================================================
    Total params: 122,570
    Trainable params: 122,570
    Non-trainable params: 0
    _________________________________________________________________

It's clear that we are dealing with the same kind of architecture, just with slightly different parameters, so recognizing brain tumors in MRI images is indeed not much more difficult than identifying cats or dogs in pictures. Furthermore, the Kaggle notebook mentioned above is capable of classifying tumors with 99% effectiveness!
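To see how similar the two networks are, the parameter counts in both summaries can be reproduced by hand. A convolution layer has one weight per kernel cell and input channel plus one bias, per filter; a dense layer has one weight per input plus one bias, per unit. In the sketch below, the 3x3 kernels of the CIFAR-10 model match the counts listed in its summary, while the kernel sizes of the tumor classifier (5x5 and 4x4 on a single-channel input) are my own inference from the listed counts, not something I read from the notebook.

```python
def conv2d_params(kernel, in_channels, filters):
    """Weights (kernel x kernel x in_channels) plus one bias, per filter."""
    return (kernel * kernel * in_channels + 1) * filters


def dense_params(inputs, units):
    """One weight per input plus one bias, per unit."""
    return (inputs + 1) * units


# Tumor classifier: kernel sizes are inferred from the counts (assumption).
tumor = [
    conv2d_params(5, 1, 64),     # conv2d
    conv2d_params(5, 64, 64),    # conv2d_1
    conv2d_params(4, 64, 128),   # conv2d_2
    conv2d_params(4, 128, 128),  # conv2d_3
    dense_params(128, 512),      # dense
    dense_params(512, 4),        # dense_1
]

# CIFAR-10 sample model: 3x3 kernels on RGB input.
cifar = [
    conv2d_params(3, 3, 32),     # conv2d
    conv2d_params(3, 32, 64),    # conv2d_1
    conv2d_params(3, 64, 64),    # conv2d_2
    dense_params(1024, 64),      # dense
    dense_params(64, 10),        # dense_1
]

print(tumor, sum(tumor))
print(cifar, sum(cifar))
```

Both lists match the Param # columns above line by line, and the CIFAR-10 total comes out at the 122,570 shown in its summary. Pooling and flatten layers do not appear because they have no trainable parameters.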

I just want to point out how simple it can be to extract and process medical data in certain cases and how problems that seem complex, like detecting brain tumors, can often be quite effectively addressed with simple, traditional solutions.

Therefore, I would encourage everyone not to shy away from medical data. As you can see, we are dealing with relatively simple formats, and with relatively simple, well-established technologies (convolutional networks, vision transformers, etc.) you can make a significant impact here. If you join an open-source healthcare project in your spare time just as a hobby and can make even a slight improvement to the neural network used, you could potentially save lives!