War Robots celebrates its 10-year anniversary this April! To this day, we continue to develop and support it, not only releasing new features for our players but also improving it technically.

In this article, we’ll discuss our many years of experience in the technical development of this large project. But first, here’s a snapshot of the project as it stands:

- Hundreds of thousands of DAU (Daily Active Users)

- Hundreds of millions of installations

- Tens of thousands of simultaneous 12-person matches

- Available on four major platforms (iOS, Android, Steam, Amazon)

- Tens of thousands of supported devices, including Android and PC

- Hundreds of servers

- Approximately 20 client developers, 9 server developers, a cross-project development team of 8 people, and 3 DevOps

- About 1.5 million lines of client-side code

- Built on Unity, starting with version 4 in 2014 and currently on version 2022 LTS (as of 2024)

To maintain the functionality of this kind of project and ensure further high-quality development, working only on immediate product tasks is not enough; it’s also key to improve its technical condition, simplify development, and automate processes – including those related to the creation of new content. Further, we must constantly adapt to the changing market of available user devices.

The text is based on interviews with Pavel Zinov, Head of Development Department at Pixonic (MY.GAMES), and Dmitry Chetverikov, Lead Developer of War Robots.

The beginning

Let's go back to 2014: the War Robots project was initially created by a small team, and all the technical solutions fit into the paradigm of rapid development and delivery of new features to the market. At this time, the company didn’t have large resources to host dedicated servers, and thus, War Robots entered the market with a network multiplayer based on P2P logic.

Photon Cloud was used to transfer data between clients: each player command, be it moving, shooting, or any other command, was sent to the Photon Cloud server using separate RPCs. Since there was no dedicated server, the game had a master client, which was responsible for the reliability of the match state for all players. At the same time, the remaining players fully processed the state of the entire game on their local clients.

As an example, there was no verification for movement: the local client moved its robot as it saw fit and sent this state to the master client, which trusted it unconditionally and simply forwarded it to the other clients in the match. Each client independently kept a log of the battle and sent it to the server at the end of the match; the server then processed the logs of all players, granted rewards, and sent the match results to all players.

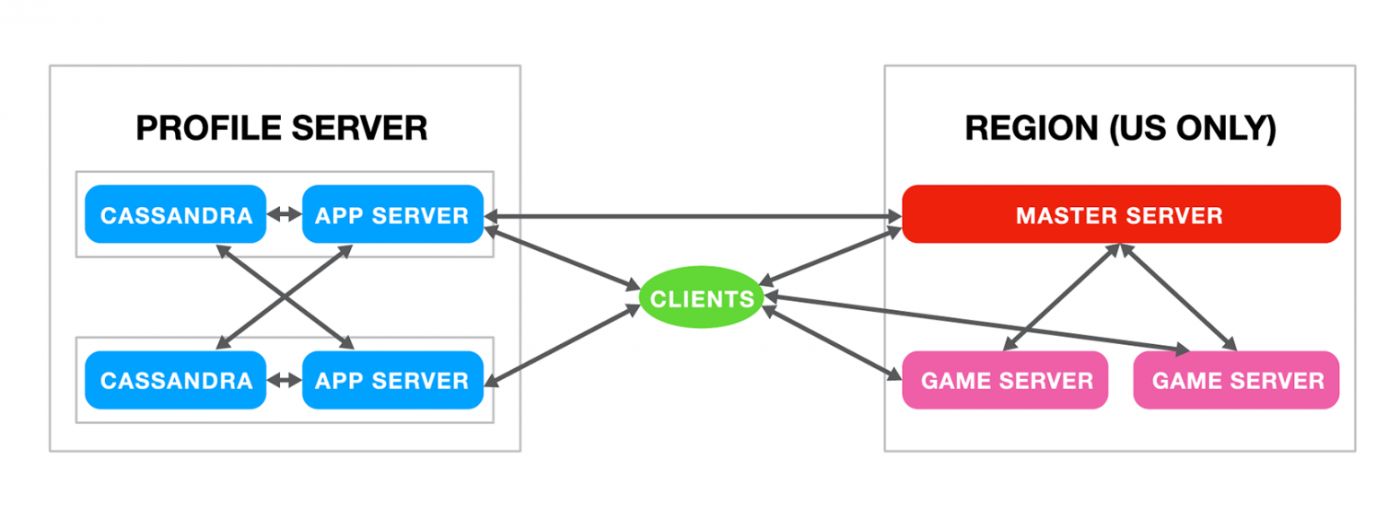

We used a special Profile Server to store information about player profiles. This consisted of many servers with a Cassandra database. Each server was a simple app server on Tomcat, and clients interacted with it via HTTP.

Drawbacks of our initial approach

The approach used in the gameplay had a number of disadvantages. The team, of course, knew about these, but due to the speed of development and delivery of the final product to the market, a number of compromises had to be made.

Among these disadvantages, first of all, was the quality of the master client connection. If the client had a bad network, then all the players in a match experienced lag. And, if the master client was not running on a very powerful smartphone, then, due to the high load placed on it, the game also experienced a delay in data transfer. So, in this case, in addition to the master client, other players suffered as well.

The second drawback was that this architecture was prone to problems with cheaters. Since the final state was transferred from the master client, this client could change many match parameters at its discretion, for example, counting the number of captured zones in the match.

Third, there was a problem with matchmaking on Photon Cloud: the oldest player in the Photon Cloud matchmaking room became the master client, and if something happened to them during matchmaking (for example, a disconnection), group creation suffered. Related fun fact: to prevent matchmaking in Photon Cloud from closing after sending a group to a match, for some time we even kept a simple PC running in our office that always stayed connected to matchmaking, which meant that matchmaking with Photon Cloud could not fail.

At some point, the number of problems and support requests reached a critical mass, and we started seriously thinking about evolving the technical architecture of the game – this new architecture was called Infrastructure 2.0.

The transition to Infrastructure 2.0

The main idea behind this architecture was the creation of dedicated game servers. At that time, the client’s team lacked programmers with extensive server development experience. However, the company was simultaneously working on a server-based, high-load analytics project, which we called AppMetr. The team had created a product that processed billions of messages per day, and they had extensive expertise in the development, configuration, and correct architecture of server solutions. And so, some members of that team joined the work on Infrastructure 2.0.

In 2015, within a fairly short period of time, a .NET server was created, wrapped in the Photon Server SDK framework and running on Windows Server. We decided to abandon Photon Cloud, keeping only the Photon Server SDK network framework in the client project, and created a Master Server for connecting clients, along with corresponding servers for matchmaking. Some of the logic was moved from the client to a dedicated game server. In particular, basic damage validation was introduced, and match result calculations were moved to the server.

The emergence of microservices

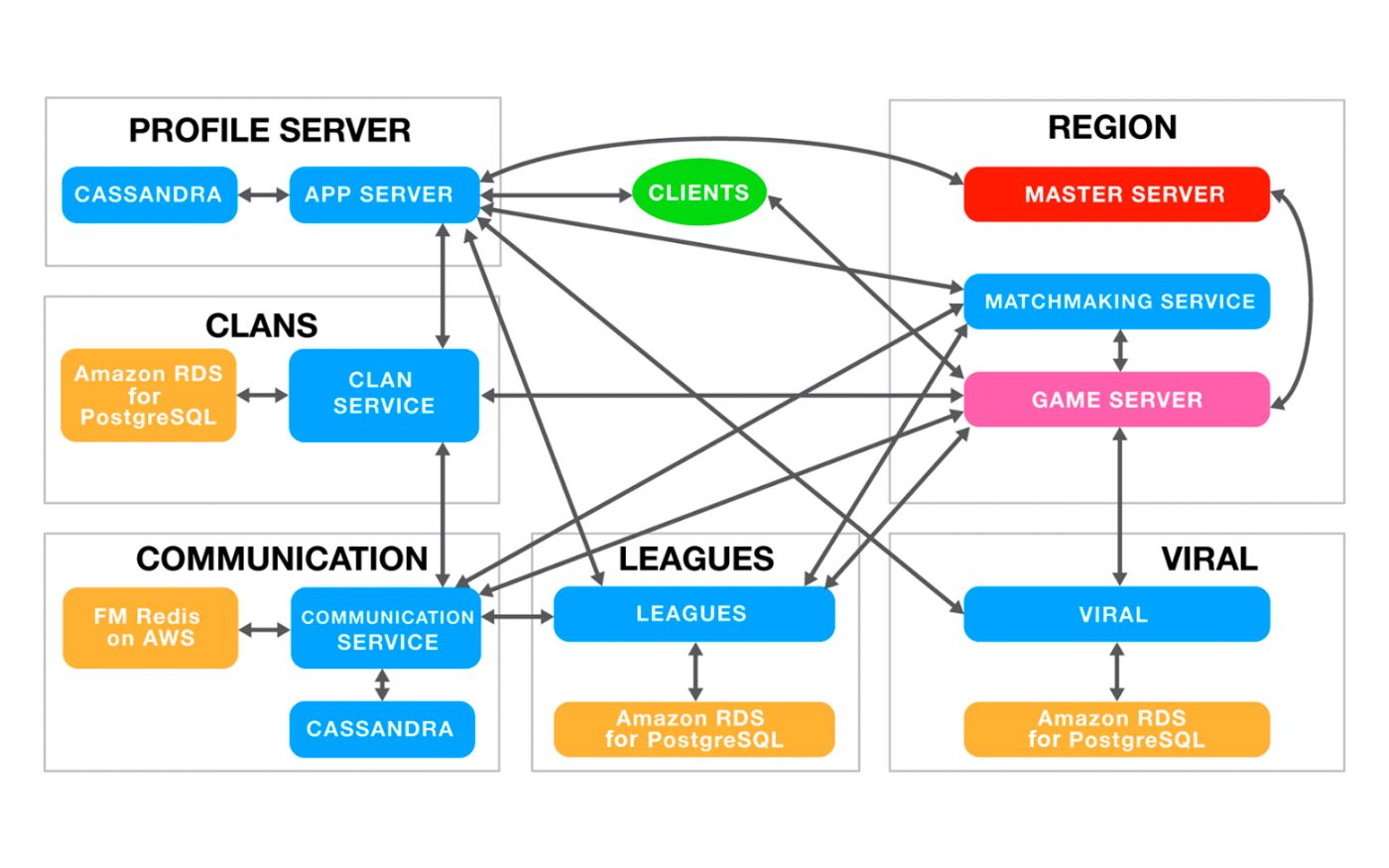

After successfully creating Infrastructure 2.0, we realized that we needed to continue moving towards decoupling the responsibilities of services: the result of this was the creation of microservice architecture. The first microservice created on this architecture was clans.

From there, a separate Communication service appeared, and this was responsible for transferring data between microservices. The client was taught to establish a connection with the “hangar” responsible for meta mechanics on the game server and to make API requests (a single entry point for interacting with services) using UDP over Photon Cloud. Gradually, the number of services grew and, as a result, we refactored the matchmaking microservice. With this, the news functionality, Inbox, was created.

When we created clans, it was clear that players wanted to communicate with each other in the game – they needed some sort of chat. This was originally created based on Photon Chat, but the entire chat history was erased as soon as the last client disconnected. Therefore, we converted this solution into a separate microservice based on the Cassandra database.

Infrastructure 4.0 and server locations

The new microservice architecture allowed us to conveniently manage a large number of services that were independent of each other, which meant horizontally scaling the entire system and avoiding downtimes.

In our game, updates occurred without the need to pause servers. The profile server was compatible with several versions of the client. As for game servers, we always kept a pool of servers for both the old and new versions of the client; this pool gradually shifted after a new version was released in the app stores, and the game servers for the old version were retired over time. The general outline of the current infrastructure was called Infrastructure 4.0 and looked like this:

In addition to changing the architecture, we also faced a dilemma about where our servers should be located, since they were supposed to cover players from all over the world. Initially, many microservices were located in Amazon AWS for a number of reasons, in particular because of the flexibility it offers in terms of scaling, which was very important during surges of gaming traffic (such as when the game was featured in stores or during UA campaigns). Additionally, back then, it was difficult to find a good hosting provider in Asia that would provide good network quality and connection with the outside world.

The only downside to Amazon AWS was its high cost. Therefore, over time, many of our servers moved to our own hardware – servers that we rent in data centers around the world. Nonetheless, Amazon AWS remained an important part of the architecture, as it allowed for the development of code that was constantly changing (in particular, the code for the Leagues, Clans, Chats, and News services) and which was not sufficiently covered by stability tests. But, as soon as we realized that the microservice was stable, we moved it to our own facilities. Currently, all our microservices run on our hardware servers.

Device quality control and more metrics

In 2016, important changes were made to the game's rendering: the concept of Ubershaders with a single texture atlas for all mechs in battle appeared, which greatly reduced the number of draw calls and improved game performance.

It became clear that our game was being played on tens of thousands of different devices, and we wanted to provide each player with the best possible gaming conditions. Thus, we created Quality Manager, which is basically a file that analyzes a player's device and enables (or disables) certain game or rendering features. Moreover, this feature allowed us to manage these functions down to the specific device model so we could quickly fix problems our players were experiencing.

It’s also possible to download Quality Manager settings from the server and dynamically select the quality on the device depending on the current performance. The logic is quite simple: the entire body of the Quality Manager is divided into blocks, each of which is responsible for some aspect of performance. (For example, the quality of shadows or anti-aliasing.) If a user's performance suffers, the system tries to change the values inside the block and select an option that will lead to an increase in performance. We will return to the evolution of Quality Manager a little later, but at this stage, its implementation was quite fast and provided the required level of control.
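The block-based adjustment can be sketched roughly as follows. This is a simplified illustration, not the actual Quality Manager: the block names, option lists, the 30 FPS target, and the "lower the first block that can still be lowered" policy are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class QualityBlock:
    """One aspect of rendering quality, e.g. shadows or anti-aliasing."""
    name: str
    options: list        # ordered from most expensive to cheapest
    current: int = 0     # index of the active option

    @property
    def value(self):
        return self.options[self.current]

    def can_downgrade(self):
        return self.current < len(self.options) - 1

    def downgrade(self):
        self.current += 1


def adjust_quality(blocks, measured_fps, target_fps=30.0):
    """Lower one block by one step when performance is below target.

    Returns the name of the block that was changed, or None.
    """
    if measured_fps >= target_fps:
        return None
    for block in blocks:
        if block.can_downgrade():
            block.downgrade()
            return block.name
    return None


blocks = [
    QualityBlock("shadows", ["cascaded", "simple", "off"]),
    QualityBlock("anti_aliasing", ["4x", "2x", "off"]),
]
changed = adjust_quality(blocks, measured_fps=22.0)
print(changed, blocks[0].value)  # shadows simple
```

Changing one block at a time keeps each adjustment small and makes it easy to tell which setting was responsible for a performance change.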

Work on Quality Manager was also necessary as graphics development continued. The game now features cascading dynamic shadows, implemented entirely by our graphics programmers.

Gradually, quite a lot of entities appeared in the code of the project, so we decided that it would be good to start managing their lifetime, as well as delimiting access to different functionality. This was especially important for separating the hangar and combat code because many resources were not being cleared when the game context changed. We started exploring various IoC dependency management containers. In particular, we looked at the StrangeIoC solution. At that time, the solution seemed rather cumbersome to us, and so we implemented our own simple DI in the project. We also introduced hangar and battle contexts.

In addition, we started working on quality control of the project; Firebase Crashlytics was integrated into the game to identify crashes, ANRs, and exceptions in the production environment.

Using our internal analytical solution called AppMetr, we created the necessary dashboards for regular monitoring of the client’s state and for comparing changes made between releases. Further, to improve the quality of the project and increase confidence in the changes made to the code, we began researching autotests.

The increase in the number of assets and unique bugs in the content made us think about organizing and unifying assets. This was especially true for mechs because their build was unique for each of them; for this, we created tools in Unity, and with these, we made mech dummies with the main necessary components. This meant that the game design department could then easily edit them – and this was how we first began unifying our work with mechs.

More platforms

The project continued to grow, and the team began to think about releasing it on other platforms. Also, as another point, for some mobile platforms at that time, it was important that the game supported as many native functions of the platform as possible, as this allowed the game to be featured. So, we started working on an experimental version of War Robots for Apple TV and a companion app for Apple Watch.

Additionally, in 2016, we launched the game on a platform that was new to us: the Amazon AppStore. In terms of technical characteristics, this platform is unique because it has a unified line of devices, like Apple, but the power of this line is at the level of low-end Android devices. With this in mind, when launching on this platform, significant work was done to optimize memory use and performance; for instance, we worked on atlases and texture compression. The Amazon payment system and Amazon’s analogue of Game Center for login and achievements were integrated into the client; as a result, the player login flow was redone, and the first achievements were launched.

It’s also worth noting that, simultaneously, the client team developed a set of tools for the Unity Editor for the first time, and these made it possible to shoot in-game videos in the engine. This made it easier for our marketing department to work with assets, control combat, and utilize the camera to create the videos that our audience loved so much.

Fighting against cheaters

Because Unity, and in particular its physics engine, was not available on the server, most of each match continued to be simulated on player devices. Because of this, problems with cheaters persisted. Periodically, our support team received feedback from our users with videos of cheaters: they sped up at a convenient time, flew around the map, killed other players from around a corner, became immortal, and so on.

Ideally, we would transfer all the mechanics to the server; however, in addition to the fact that the game was in constant development and had already accumulated a fair amount of legacy code, an approach with an authoritative server might also have other disadvantages, such as increasing the load on the infrastructure and demanding a better Internet connection. Given that most of our users played on 3G/4G networks, this approach, although obvious, was not an effective solution to the problem. As an alternative approach to combating cheaters, the team came up with a new idea: creating a “quorum.”

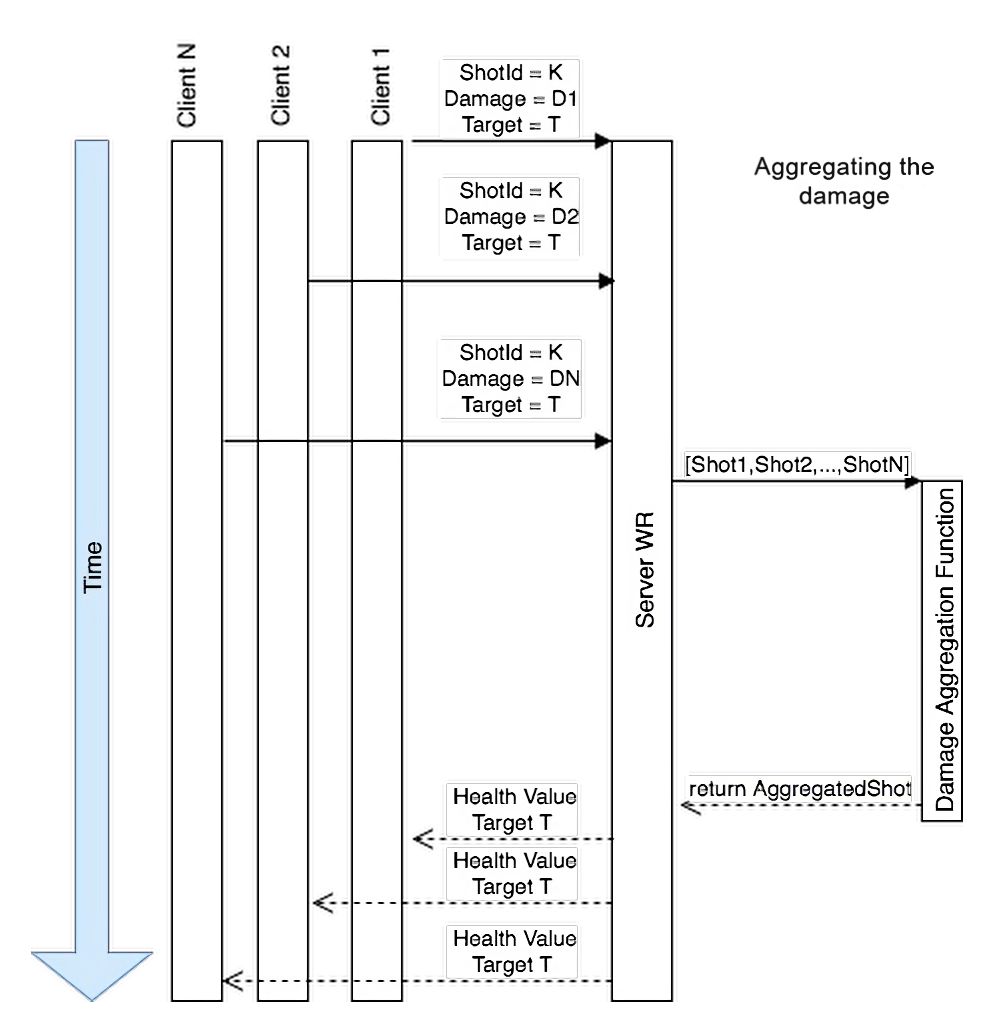

Quorum is a mechanism that allows you to compare multiple simulations of different players when confirming damage done; taking damage is one of the main features of the game, on which virtually the rest of its state depends. For example, if you destroy your opponents, they will not be able to capture beacons.

The idea behind this solution was as follows: each player still simulated the entire world (including shooting other players) and sent the results to the server. The server analyzed the results from all players and decided whether a player had ultimately been damaged and to what extent. Our matches involve 12 people, so for the server to consider that damage has been done, it’s enough for this fact to be registered within the local simulations of 7 players. These results would then be sent to the server for further validation. Schematically, this algorithm can be represented as follows:

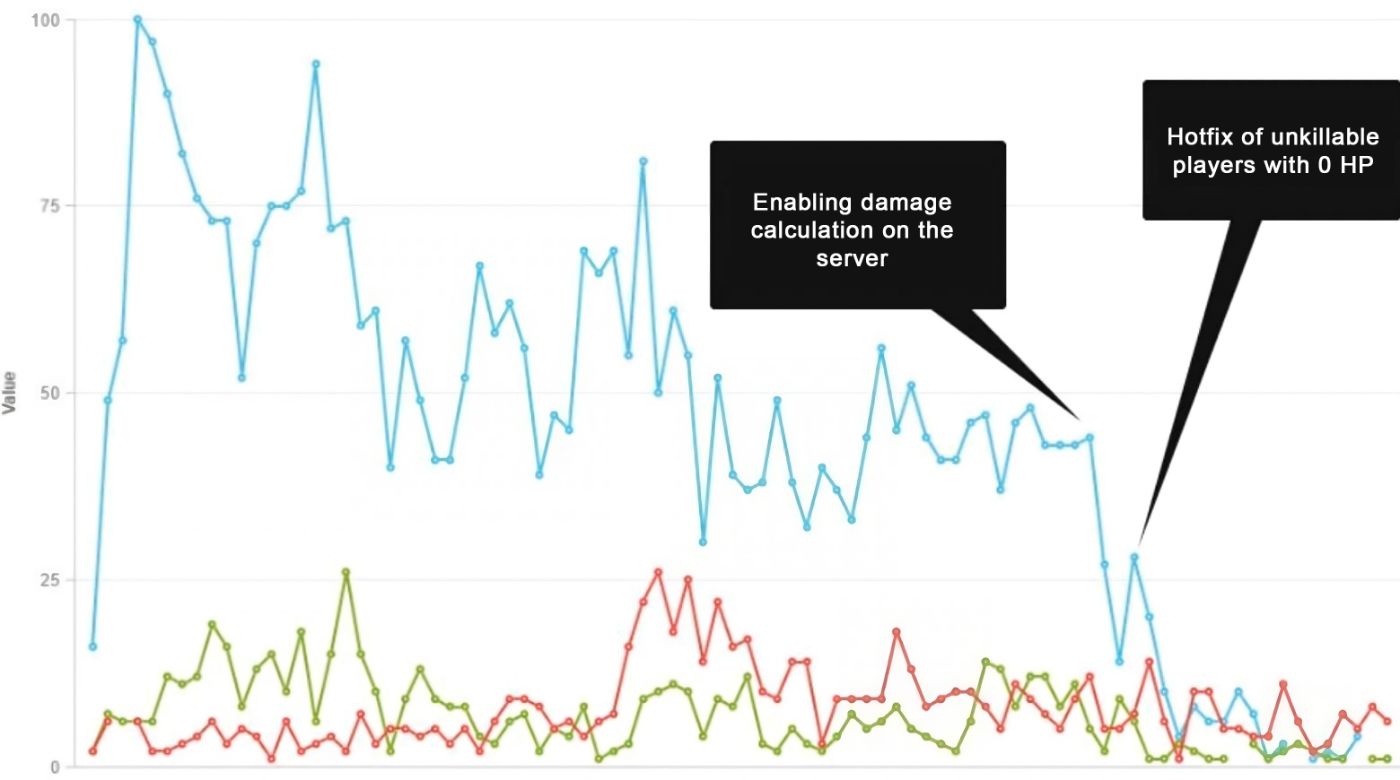
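The counting step of the quorum can be sketched in a few lines. This is a minimal illustration and not the actual server code: the report format (a set of hit events per client) and the names `confirmed_hits`, `reports`, and the event tuples are assumptions made for the example, and damage amounts are left out. Only the threshold of 7 out of 12 comes from the text above.

```python
from collections import defaultdict

QUORUM = 7  # from the article: 7 of the 12 local simulations must agree


def confirmed_hits(reports, quorum=QUORUM):
    """Return the hit events that enough clients observed.

    reports: dict mapping a reporter id to the set of hit events seen in that
    client's local simulation. An event here is a hypothetical tuple
    (attacker_id, victim_id, shot_id).
    """
    voters_by_event = defaultdict(set)
    for reporter, events in reports.items():
        for event in events:
            voters_by_event[event].add(reporter)
    return {e for e, voters in voters_by_event.items() if len(voters) >= quorum}


real_hit = ("p1", "p2", 101)   # p1 really hit p2
fake_hit = ("p2", "p1", 999)   # only the cheater p2 claims this one

reports = {f"p{i}": {real_hit} for i in range(1, 13) if i != 2}
reports["p2"] = {fake_hit}     # the cheater hides the real hit and invents another

print(confirmed_hits(reports))  # {('p1', 'p2', 101)}
```

A single client, or even a small group of colluding clients, cannot confirm or suppress a hit on its own in this scheme, which is the property the quorum relies on.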

This scheme allowed us to significantly reduce the number of cheaters who made themselves unkillable in battle. The following graph shows the number of complaints against these users as well as the stages of enabling damage calculation features on the server and enabling damage quorum:

This damage-dealing mechanism solved problems with cheating for a long time because, despite the lack of physics on the server (and therefore the inability to take into account such things as surface topography and the real position of robots in space), the results of the simulation for all clients and their consensus gave an unambiguous understanding of who caused damage to whom and under what conditions.

Further development of the project

Over time, in addition to dynamic shadows, we added post-effects to the game using the Unity Post Processing Stack and batch rendering. Examples of improved graphics as a result of applying post-processing effects can be viewed in the picture below:

To better understand what was happening in debug builds, we created a logging system and deployed a local service in the internal infrastructure that could collect logs from internal testers and make it easier to find the causes of bugs. This tool is still used for playtests by QA, and the results of its work are attached to tickets in Jira.

We also added a self-written Package Manager to the project. Initially, we wanted to improve the work with various packages and external plugins (of which the project had already accumulated a sufficient number). However, Unity’s functionality was not enough at that time, so our internal Platform team developed its own solution with package versioning, storage using NPM, and the ability to connect packages to the project simply by adding a link to GitHub. Since we still wanted to use a native engine solution, we consulted with colleagues from Unity, and a number of our ideas were included in Unity's final solution when they released their Package Manager in 2018.

We continued to expand the number of platforms where our game was available. Eventually, Facebook Gameroom, a platform that supports keyboard and mouse, appeared. This is a solution from Facebook (Meta), which allows users to run applications on a PC; essentially, it’s a Steam store analog. Of course, the Facebook audience is quite diverse, and Facebook Gameroom was launched on a large number of devices, mainly non-gaming ones. So, we decided to keep mobile graphics in the game so as not to burden the PCs of the main audience. From a technical point of view, the only significant changes the game required were the integration of the SDK, payment system, and support for keyboard and mouse using the native Unity input system.

Technically, it differed little from the build of the game for Steam, but the quality of the devices was significantly lower because the platform was positioned as a place for casual players with fairly simple devices that could run nothing more than a browser. With this positioning, Facebook hoped to enter a fairly large market. The resources of these devices were limited, and the platform, in particular, had persistent memory and performance issues.

Later, the platform closed, and we transferred our players to Steam where possible. For that, we developed a special system with codes for transferring accounts between platforms.

Giving War Robots in VR a try

Another 2017 event of note: the release of the iPhone X, the first smartphone with a notch. At that time, Unity didn’t support devices with notches, so we had to create a custom solution based on the UnityEngine.Screen.safeArea parameters, which made the UI scale and stay within the safe zones of the phone screen.
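The arithmetic behind such a safe-area layout is small enough to show. The sketch below is written in Python purely for illustration (the real solution would live in C# inside Unity), and the function name and the pixel values are assumptions, not details from the project. It converts a safe-area rectangle in pixels into normalized anchors in the 0..1 range, which is how a root UI panel is typically constrained.

```python
def safe_area_anchors(safe_x, safe_y, safe_w, safe_h, screen_w, screen_h):
    """Convert a safe-area rectangle in pixels to normalized (0..1) anchors.

    Returns (anchor_min, anchor_max): the bottom-left and top-right corners
    of the safe area as fractions of the full screen.
    """
    anchor_min = (safe_x / screen_w, safe_y / screen_h)
    anchor_max = ((safe_x + safe_w) / screen_w, (safe_y + safe_h) / screen_h)
    return anchor_min, anchor_max


# Illustrative landscape values for a notched phone; not measured data.
anchor_min, anchor_max = safe_area_anchors(
    safe_x=132, safe_y=63, safe_w=2172, safe_h=1062,
    screen_w=2436, screen_h=1125,
)
print(anchor_min, anchor_max)
```

Applying these anchors to a single root container lets every child element keep its own layout rules while staying clear of the notch and the home indicator.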

Additionally, in 2017, we decided to try something else – releasing a VR version of our game on Steam. Development lasted about six months and included testing on all the helmets available at that time: Oculus, HTC Vive, and Windows Mixed Reality. This work was carried out using the Oculus API and Steam API. In addition, a demo version for PS VR headsets was made ready, as well as support for MacOS.

From a technical point of view, it was necessary to maintain a stable FPS: 60 FPS for PS VR and 90 FPS for HTC Vive. To achieve this, custom-written Zone Culling was used in addition to standard frustum and occlusion culling, as well as reprojection (when some frames are generated based on previous ones).

To solve the problem of motion sickness, an interesting creative and technical solution was adopted: our player became a robot pilot and sat in the cockpit. The cabin was a static element, so the brain perceived it as some kind of constant in the world, and therefore, movement in space worked very well.

The game also had a scenario that required the development of several separate triggers, scripts, and homemade timelines since Cinemachine was not yet available in that version of Unity.

For each weapon, the logic for targeting, locking, tracking, and auto-aiming was written from scratch.

The version was released on Steam and was well received by our players. However, after the Kickstarter campaign, we decided not to continue the development of the project since the development of a full-fledged PvP shooter for VR was fraught with technical risks and the market’s unreadiness for such a project.

Dealing with our first serious bug

While we were working on improving the technical component of the game, as well as creating new and developing existing mechanics and platforms, we came across the first serious bug in the game, which negatively affected revenue when launching a new promotion. The problem was that the “Price” button was incorrectly localized as “Prize.” Most of the players were confused by this, made purchases with real currency, and then asked for refunds.

The technical problem was that, back then, all of our localizations were built into the game client. When this problem emerged, we understood that we could no longer postpone working on a solution that allowed us to update localizations. This is how a solution based on CDN and accompanying tools, such as projects in TeamCity, which automated uploading localization files to the CDN, was created.

This allowed us to download localizations from the services we used (POEditor) and upload raw data to the CDN.

After that, the profile server stored, for each version of the client, a link to the corresponding localization data. When the application was launched, the profile server sent this link to the client, which downloaded and cached the data.
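The client side of this flow can be sketched as "download on a cache miss, otherwise read from disk." The example below is only an illustration: the function names, the JSON file format, the cache layout, and the `cdn.example.com` link are all assumptions, and the download function is injected so the sketch runs without a network.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def load_localization(url, fetch, cache_dir):
    """Return the localization table for `url`, downloading only on a cache miss."""
    cache_dir = Path(cache_dir)
    cache_dir.mkdir(parents=True, exist_ok=True)
    # Assumption: updated localization data is published under a new link,
    # so the link itself can serve as the cache key.
    key = hashlib.sha256(url.encode("utf-8")).hexdigest()
    cache_file = cache_dir / f"{key}.json"
    if cache_file.exists():
        return json.loads(cache_file.read_text(encoding="utf-8"))
    raw = fetch(url)                      # bytes from the CDN
    cache_file.write_bytes(raw)
    return json.loads(raw.decode("utf-8"))


downloads = []


def fake_fetch(url):
    """Stand-in for an HTTP download; records every call."""
    downloads.append(url)
    return json.dumps({"offer.button.price": "Price"}).encode("utf-8")


link = "https://cdn.example.com/loc/client-1.0/en.json"  # hypothetical link
cache = tempfile.mkdtemp()
first = load_localization(link, fake_fetch, cache)
second = load_localization(link, fake_fetch, cache)
print(first["offer.button.price"], len(downloads))  # Price 1
```

With this shape, fixing a string like the "Prize" typo only requires uploading a corrected file and pointing the profile server at the new link; no client release is needed.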

Optimizing work with data

Flash forward to 2018. As the project grew, so did the amount of data transferred from the server to the client. At the time of connecting to the server, it was necessary to download quite a lot of data about the balance, current settings, etc.

The current data was presented in XML format and serialized by the standard Unity serializer.

In addition to transferring a fairly large amount of data when launching the client, as well as when communicating with the profile server and the game server, player devices spent a lot of memory storing the current balance and serializing/deserializing it.

It became clear that in order to improve the performance and startup time of the application, it would be necessary to conduct R&D to find alternative protocols.

The results of the study on our test data are displayed in this table:

| Protocol | Size | Alloc | Time |
|---|---|---|---|
| XML | 2.2 MB | 18.4 MiB | 518.4 ms |
| MessagePack (contractless) | 1.7 MB | 2 MiB | 32.35 ms |
| MessagePack (str keys) | 1.2 MB | 1.9 MiB | 25.8 ms |
| MessagePack (int keys) | 0.53 MB | 1.9 MiB | 16.5 ms |
| FlatBuffers | 0.5 MB | 216 B | 0 ms / 12 ms / 450 KB |

As a result, we chose the MessagePack format since migration was cheaper than switching to FlatBuffers with similar output results, especially when using MessagePack with integer keys.

For FlatBuffers (as with Protocol Buffers), it is necessary to describe the message format in a separate language, generate C# and Java code, and use the generated code in the application. Since we didn’t want to incur this additional cost of refactoring the client and server, we switched to MessagePack.
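To see why integer keys shrink the payload, it helps to look at the bytes. The sketch below hand-encodes a tiny subset of the MessagePack format (small maps, short strings, and unsigned integers up to 65535) so that it needs no third-party library; it is an illustration of the wire format, not the serializer used in the game, and the field names are made up.

```python
def pack(value):
    """Encode a small subset of MessagePack: uints < 65536, short str, small dict."""
    if isinstance(value, int) and not isinstance(value, bool):
        if 0 <= value <= 0x7F:
            return bytes([value])                             # positive fixint
        if 0 <= value <= 0xFF:
            return bytes([0xCC, value])                       # uint 8
        if 0 <= value <= 0xFFFF:
            return bytes([0xCD]) + value.to_bytes(2, "big")   # uint 16
    if isinstance(value, str):
        data = value.encode("utf-8")
        if len(data) <= 31:
            return bytes([0xA0 | len(data)]) + data           # fixstr
    if isinstance(value, dict) and len(value) <= 15:
        out = bytes([0x80 | len(value)])                      # fixmap
        for key, item in value.items():
            out += pack(key) + pack(item)
        return out
    raise ValueError(f"unsupported value: {value!r}")


# The same hypothetical weapon record, keyed by name and by field index.
by_name = {"damage": 120, "reload": 5, "range": 600}
by_index = {0: 120, 1: 5, 2: 600}

print(len(pack(by_name)), len(pack(by_index)))  # 26 9
```

With string keys, every record repeats its field names on the wire; with integer keys, each key costs a single byte, at the price of having to keep the index-to-field mapping stable between client and server versions.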

The transition was almost seamless, and in the first releases, we supported the ability to roll back to XML until we were convinced that there were no problems with the new system. The new solution covered all our tasks – it significantly reduced client loading time and also improved performance when making requests to servers.

Each feature or new technical solution in our project is released under a “flag.” This flag is stored in the player's profile and comes to the client when the game starts, along with the balance. As a rule, when a new functionality is released, especially a technical one, several client releases contain both functionalities – old and new. New functionality activation occurs strictly in accordance with the state of the flag described above, which allows us to monitor and adjust the technical success of a particular solution in real-time.
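A flag-gated rollout of this kind can be sketched as follows. The class, the flag name `use_messagepack_balance`, and the two loader functions are hypothetical names chosen for the example; the only thing taken from the text is the idea that the client ships both code paths and picks one based on a flag delivered with the profile.

```python
class FeatureFlags:
    """Flags received from the player's profile at game start."""

    def __init__(self, flags):
        self._flags = dict(flags)

    def is_enabled(self, name):
        # Unknown flags are treated as off, so old behaviour is the default.
        return bool(self._flags.get(name, False))


def load_balance_legacy():
    return "xml"          # placeholder for the old XML code path


def load_balance_new():
    return "messagepack"  # placeholder for the new MessagePack code path


def choose_balance_loader(flags):
    """Pick the implementation strictly according to the flag state."""
    if flags.is_enabled("use_messagepack_balance"):
        return load_balance_new
    return load_balance_legacy


rolled_out = FeatureFlags({"use_messagepack_balance": True})
not_yet = FeatureFlags({})
print(choose_balance_loader(rolled_out)(), choose_balance_loader(not_yet)())
# messagepack xml
```

Because the switch is data on the server side, a problematic feature can be turned off for some or all players without shipping a new client build.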

Gradually, it was time to update the Dependency Injection functionality in the project. The current one could no longer cope with a large number of dependencies and, in some cases, introduced non-obvious connections that were very difficult to break and refactor. (This was especially true for innovations related to the UI in one way or another.)

When choosing a new solution, the choice was simple: the well-known Zenject, which had been proven in our other projects. This project has been in development for a long time, is actively supported, and keeps gaining new features, and many developers on the team were already familiar with it to some degree.

Little by little, we started rewriting War Robots using Zenject. All the new modules were developed with it, and the old ones were gradually refactored. Since adopting Zenject, we’ve had a clearer sequence of loading services within the contexts that we discussed earlier (combat and hangar), and this has allowed developers to dive into the project more easily and to develop new features within these contexts more confidently.

In relatively new versions of Unity, it became possible to work with asynchronous code via async/await. Our code already used asynchronous code, but there was no single standard across the codebase, and since the Unity scripting backend began to support async/await, we decided to conduct R&D and standardize our approaches to asynchronous code.

Another motivation was to remove callback hell from the code – this is when there is a sequential chain of asynchronous calls, and each call waits for the results of the previous one.

At that time, there were several popular solutions, such as RSG or UniRx; we compared them all and compiled them into a single table:

| Metric | RSG.Promise | TPL | TPL w/await | UniTask | UniTask w/async |
|---|---|---|---|---|---|
| Time until finished, s | 0.15843 | 0.1305305 | 0.1165172 | 0.1330536 | 0.1208553 |
| First frame Time/Self, ms | 105.25/82.63 | 13.51/11.86 | 21.89/18.40 | 28.80/24.89 | 19.27/15.94 |
| First frame allocations | 40.8 MB | 2.1 MB | 5.0 MB | 8.5 MB | 5.4 MB |
| Second frame Time/Self, ms | 55.39/23.48 | 0.38/0.04 | 0.28/0.02 | 0.32/0.03 | 0.69/0.01 |
| Second frame allocations | 3.1 MB | 10.2 KB | 10.3 KB | 10.3 KB | 10.4 KB |

Ultimately, we settled on using native async/await as the standard for working with asynchronous C# code that most developers are familiar with. We decided not to use UniRx.Async, since the advantages of the plugin did not cover the need to rely on a third-party solution.

We chose to follow the path of the minimum required functionality. We abandoned the use of RSG.Promise or holders, because, first of all, it was necessary to train new developers to work with these, usually unfamiliar tools, and second, it was necessary to create a wrapper for the third-party code that uses async Tasks in RSG.Promise or holders. The choice of async/await also helped to standardize the development approach between the company’s projects. This transition solved our problems – we ended up with a clear process for working with asynchronous code and removed the difficult-to-support callback hell from the project.
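The readability gain from async/await is easiest to see on a short chain of dependent calls. The sketch below uses Python's `asyncio` as a stand-in for C# async/await, since the shape of the code is the same in both languages; the three steps and their return values are hypothetical and exist only to show the flow.

```python
import asyncio


async def login(user):
    await asyncio.sleep(0)   # stands in for a network round trip
    return {"user": user, "token": "t-1"}


async def load_profile(session):
    await asyncio.sleep(0)
    return {"user": session["user"], "level": 30}


async def load_balance(profile):
    await asyncio.sleep(0)
    return {"user": profile["user"], "level": profile["level"], "gold": 500}


async def start_game(user):
    # Each step needs the result of the previous one, yet the code reads
    # top to bottom instead of nesting one callback inside another.
    session = await login(user)
    profile = await load_profile(session)
    return await load_balance(profile)


result = asyncio.run(start_game("pilot"))
print(result)  # {'user': 'pilot', 'level': 30, 'gold': 500}
```

Error handling follows the same pattern: an ordinary `try`/`except` around the awaited calls replaces a separate error callback at every level of the chain.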

The UI was gradually improved. Since in our team, the functionality of new features is developed by client programmers, and layout and interface development are carried out by the UI/UX department, we needed a solution that would allow us to parallelize work on the same feature – so that the layout designer could create and test the interface while the programmer writes the logic.

The solution to this problem was found in the transition to the MVVM model of working with the interface. This model allows the interface designer not only to design an interface without involving a programmer but also to see how the interface will react to certain data when no actual data has yet been connected. After some research into off-the-shelf solutions, as well as rapid prototyping of our own solution called Pixonic ReactiveBindings, we compiled a comparison table with the following results:

| Solution | CPU time | Mem. Alloc | Mem. Usage |
|---|---|---|---|
| Peppermint Data Binding | 367 ms | 73 KB | 17.5 MB |
| DisplayFab | 223 ms | 147 KB | 8.5 MB |
| Unity Weld | 267 ms | 90 KB | 15.5 MB |
| Pixonic ReactiveBindings | 152 ms | 23 KB | 3 MB |

As soon as the new system had proven itself, primarily in terms of simplifying the production of new interfaces, we started using it for all new projects, as well as all new features appearing in the project.
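The core idea of such bindings can be sketched with an observable property that pushes every change to the views bound to it. This is a generic illustration of the MVVM pattern and does not reflect the Pixonic ReactiveBindings API; all class and field names below are invented for the example.

```python
class ReactiveProperty:
    """A value that notifies bound callbacks whenever it changes."""

    def __init__(self, value):
        self._value = value
        self._subscribers = []

    @property
    def value(self):
        return self._value

    @value.setter
    def value(self, new_value):
        if new_value == self._value:
            return
        self._value = new_value
        for callback in list(self._subscribers):
            callback(new_value)

    def bind(self, callback):
        self._subscribers.append(callback)
        callback(self._value)   # push the current value immediately


class OfferViewModel:
    """View model a programmer fills with real data later."""

    def __init__(self, title, price):
        self.title = ReactiveProperty(title)
        self.price = ReactiveProperty(price)


class Label:
    """Stand-in for a UI text element."""

    def __init__(self):
        self.text = ""

    def set_text(self, value):
        self.text = str(value)


# A designer can bind the layout to mock data before any game logic exists.
mock = OfferViewModel(title="Starter pack", price="9.99")
price_label = Label()
mock.price.bind(price_label.set_text)
mock.price.value = "4.99"
print(price_label.text)  # 4.99
```

The view only knows about the view model's properties, so the layout can be built and tested against mock values while the logic that produces the real values is still being written.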

Remaster of the game for new generations of mobile devices

By 2019, several devices from Apple and Samsung had been released that were quite powerful in terms of graphics, so our company came up with the idea of a significant upgrade of War Robots. We wanted to harness the power of all these new devices and update the visual image of the game.

In addition to the updated image, we also had several requirements for our updated product: we wanted to support a 60 FPS game mode, as well as different qualities of resources for different devices.

The current Quality Manager also required a rework. The number of qualities within blocks kept growing, and because these were switched on the fly, they reduced performance. They also created a huge number of permutations, each of which had to be tested, which imposed additional costs on the QA team with each release.

The first thing we started with when reworking the client was refactoring shooting. The original implementation depended on the frame rendering speed because the game entity and its visual representation in the client were one and the same object. We decided to separate these entities so that the game entity of the shot was no longer dependent on its visuals, and this achieved fairer play between devices with different frame rates.
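One common way to decouple a simulated entity from the frame rate is a fixed-step update driven by an accumulator. The sketch below shows that general technique under stated assumptions: the 50 ms step, the class name `Shot`, and the integer units are illustrative choices, not values from the game. Integer milliseconds are used so the comparison is exact.

```python
TICK_MS = 50  # assumed fixed simulation step (20 ticks per second)


class Shot:
    """Game-side state of a shot; rendering would only read `position`."""

    def __init__(self, units_per_tick):
        self.position = 0
        self.units_per_tick = units_per_tick
        self._pending_ms = 0

    def advance(self, frame_ms):
        """Consume one rendered frame's worth of time in fixed-size steps."""
        self._pending_ms += frame_ms
        while self._pending_ms >= TICK_MS:
            self.position += self.units_per_tick
            self._pending_ms -= TICK_MS


def simulate(frame_ms, total_ms=1000):
    shot = Shot(units_per_tick=3)
    for _ in range(total_ms // frame_ms):
        shot.advance(frame_ms)
    return shot.position


# A device rendering 50 frames per second and one rendering 25 frames
# per second end up with the shot in the same place after one second.
print(simulate(frame_ms=20), simulate(frame_ms=40))  # 60 60
```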

The game deals with a large number of shots, but the algorithms for processing the data of these are quite simple – moving, deciding if the enemy was hit, etc. The Entity Component System concept was a perfect fit for organizing the logic of projectile movement in the game, so we decided to stick with it.
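A minimal ECS-style layout for projectiles might look like the sketch below. It is a toy illustration of the concept (entities as ids, components as plain data, systems as functions over that data) and not the framework used in the project; every name in it is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Position:
    x: float
    y: float


@dataclass
class Velocity:
    dx: float
    dy: float


class World:
    """Entities are plain ids; components live in per-type tables."""

    def __init__(self):
        self._next_entity = 0
        self.positions = {}
        self.velocities = {}

    def spawn(self, position, velocity):
        entity = self._next_entity
        self._next_entity += 1
        self.positions[entity] = position
        self.velocities[entity] = velocity
        return entity

    def destroy(self, entity):
        self.positions.pop(entity, None)
        self.velocities.pop(entity, None)


def movement_system(world, dt):
    """Move every entity that has both a position and a velocity."""
    for entity, velocity in world.velocities.items():
        position = world.positions[entity]
        position.x += velocity.dx * dt
        position.y += velocity.dy * dt


def hit_system(world, targets, radius):
    """Report and remove projectiles that are within `radius` of a target."""
    hits = []
    for entity, position in list(world.positions.items()):
        for name, (tx, ty) in targets.items():
            if (position.x - tx) ** 2 + (position.y - ty) ** 2 <= radius ** 2:
                hits.append((entity, name))
                world.destroy(entity)
                break
    return hits


world = World()
shot = world.spawn(Position(0.0, 0.0), Velocity(10.0, 0.0))
targets = {"enemy": (10.0, 0.0)}
hits = []
for _ in range(2):                       # two simulation ticks of 0.5 s
    movement_system(world, dt=0.5)
    hits += hit_system(world, targets, radius=1.0)
print(hits)  # [(0, 'enemy')]
```

Because the systems only iterate over flat component tables, the same simple loop handles one projectile or thousands, which is what makes the approach attractive for a game with a large number of shots.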

In addition, we were already using ECS to work with logic in all our new projects, and it was time to apply this paradigm to our main project for the sake of unification. As a result of the work done, we met a key requirement: the ability to run the game at 60 FPS. However, not all of the combat code was transferred to ECS, so the potential of this approach could not be fully realized. Ultimately, we didn’t improve performance as much as we would have liked, but we didn’t degrade it either, which is still an important indicator with such a strong paradigm and architecture shift.

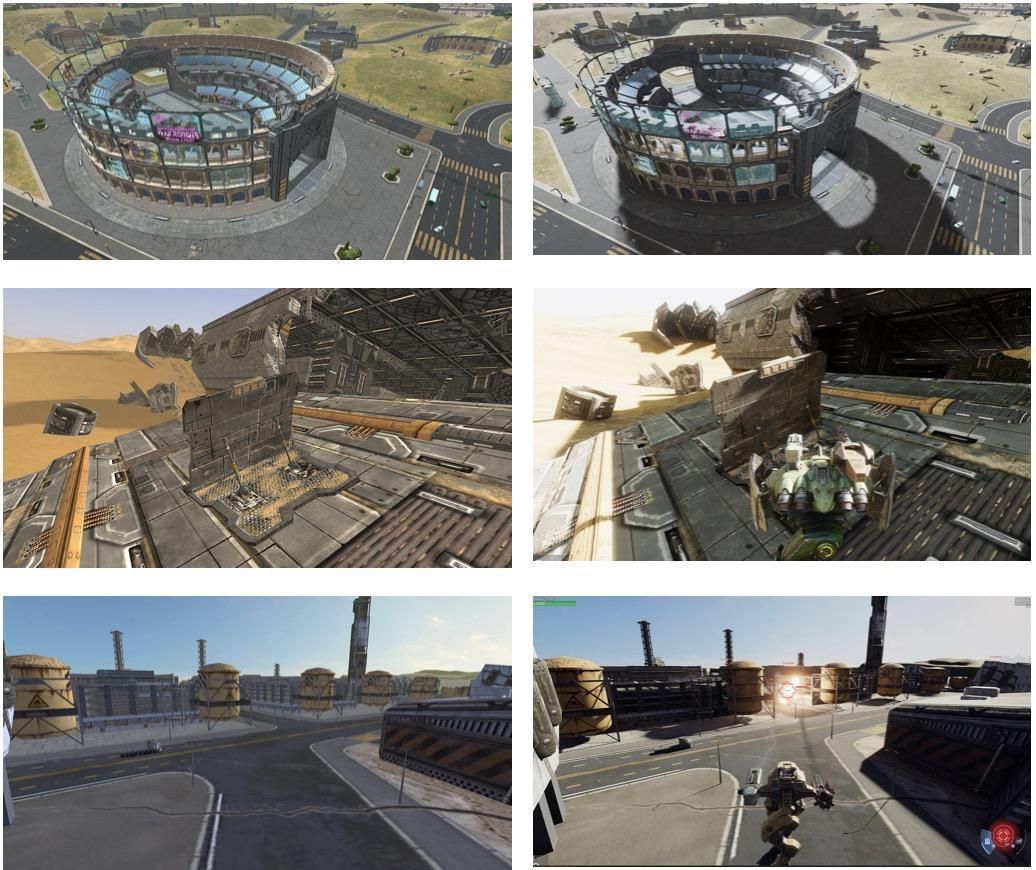

At the same time, we began working on our PC version to see what level of graphics we could achieve with our new graphics stack. It began to leverage Unity SRP, which at that time was still in the preview version and was constantly changing. The picture that came out for the Steam version was quite impressive:

Further, some of the graphical features shown in the image above could be transferred to powerful mobile devices. This was especially true of Apple devices, which historically have good performance characteristics, excellent drivers, and tight integration of hardware and software; this allows them to produce a very high-quality picture without any workarounds on our side.

We quickly understood that changing the graphics stack alone would not change the image. It also became obvious that, in addition to powerful devices, we would need content for weaker devices. So, we began planning to develop content for different quality levels.

This meant that mechs, guns, maps, effects, and their textures had to be split by quality, which translates to different entities for different qualities. That is, the models and texture sets of a mech that runs in HD quality differ from those of a mech that runs in other qualities.

In addition, the game devotes a large amount of resources to maps, and these also need to be remade to comply with the new qualities. It also became apparent that the current Quality Manager did not meet our needs as it did not control asset quality.

So, first, we had to decide which qualities would be available in our game. Drawing on our experience with the existing Quality Manager, we realized that the new version needed several fixed qualities that users could switch between in the settings themselves, up to the maximum quality available for their device.

The new quality system identifies the user's device and, based on the device model (on iOS) or the GPU model (on Android), sets the maximum quality available to that player. That quality and all lower ones are then available.

Also, for each quality, there is a setting that switches the maximum FPS between 30 and 60. Initially, we planned to have about five qualities (ULD, LD, MD, HD, UHD). However, during development, it became clear that this many qualities would take a very long time to develop and would not allow us to reduce the load on QA. With this in mind, we ended up with the following qualities in the game: HD, LD, and ULD. (For reference, the current distribution of the audience across these qualities is as follows: HD - 7%, LD - 72%, ULD - 21%.)
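The selection logic described above can be sketched roughly as follows. This is an illustrative Python sketch, not the game's actual Quality Manager: the lookup tables, their placeholder keys, and the fallback to ULD for unknown devices are all assumptions made for the example.

```python
from enum import IntEnum


class Quality(IntEnum):
    """The three shipped qualities, ordered from lowest to highest."""
    ULD = 0
    LD = 1
    HD = 2


# Placeholder lookup tables; real tables would list actual device and GPU models.
IOS_MAX_BY_MODEL = {"ios-model-high": Quality.HD, "ios-model-mid": Quality.LD}
ANDROID_MAX_BY_GPU = {"gpu-high": Quality.HD, "gpu-mid": Quality.LD}

FPS_OPTIONS = (30, 60)


def max_quality(platform, device_model="", gpu_model=""):
    """Maximum quality: keyed by device model on iOS, by GPU model on Android."""
    if platform == "ios":
        return IOS_MAX_BY_MODEL.get(device_model, Quality.ULD)  # assumed fallback
    if platform == "android":
        return ANDROID_MAX_BY_GPU.get(gpu_model, Quality.ULD)  # assumed fallback
    raise ValueError(f"unsupported platform: {platform}")


def available_qualities(maximum):
    """The maximum quality and every quality below it can be selected."""
    return [q for q in Quality if q <= maximum]


def is_valid_setting(maximum, quality, fps):
    """A user setting is valid if it does not exceed the device maximum."""
    return quality <= maximum and fps in FPS_OPTIONS
```

For example, a device whose GPU maps to LD would be offered ULD and LD, each with a 30 or 60 FPS cap, while HD stays unavailable.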

As soon as we understood that we needed to implement more qualities, we started working out how to sort assets according to those qualities. To simplify this work, we agreed on the following approach: the artists would create maximum-quality (HD) assets, and then scripts and other automation tools would simplify parts of these assets for use in the other qualities.

In the process of working on this automation system, we developed the following solutions:

- Asset Importer – allows us to set the necessary settings for textures

- Mech Creator – a tool that allows for the creation of several mech versions based on Unity Prefab Variants, as well as quality and texture settings, distributed in the corresponding project folders.

- Texture Array Packer – a tool that allows you to pack textures into texture arrays

- Quality Map Creator – a tool for creating multiple map qualities from a source UHD quality map
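As a rough illustration of the "author once in HD, derive the rest automatically" approach, the following Python sketch derives texture settings for lower qualities from an HD source. The downscale factors, the minimum size, and the `TextureSettings` fields are hypothetical values for the example and do not describe the actual tools listed above.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class TextureSettings:
    max_size: int      # longest side in pixels
    mipmaps: bool
    compression: str


# Hypothetical rule: each lower quality divides the HD texture size.
DOWNSCALE = {"HD": 1, "LD": 2, "ULD": 4}
MIN_SIZE = 64  # assumed floor so that small textures are not reduced to nothing


def derive(hd_settings, quality):
    """Derive the settings for one quality from the HD source settings."""
    factor = DOWNSCALE[quality]
    size = max(MIN_SIZE, hd_settings.max_size // factor)
    return replace(hd_settings, max_size=size)


def derive_all(hd_settings):
    """Produce one settings object per quality from a single HD source."""
    return {quality: derive(hd_settings, quality) for quality in DOWNSCALE}
```

With a rule like this, artists only maintain the HD source, and rerunning the script regenerates the LD and ULD settings whenever the source changes.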

A lot of work has been done to refactor existing mech and map resources. The original mechs weren't designed with different qualities in mind and were therefore stored in single prefabs. After refactoring, base parts, mainly containing the logic, were extracted from them, and Prefab Variants were used to create variants with textures for a specific quality. Plus, we added tools that could automatically split a mech into different qualities, taking into account the hierarchy of texture storage folders for each quality.

In order to deliver specific assets to specific devices, it was necessary to divide all the content in the game into bundles and packs. The Main pack contains the assets needed to run the game and the assets used at every quality level. In addition, there are quality packs for each platform: Android_LD, Android_HD, Android_ULD, iOS_LD, iOS_HD, iOS_ULD, and so on.

The division of assets into packs was made possible thanks to the ResourceSystem tool, which was created by our Platform team. This system allowed us to collect assets into separate packages and then embed them into the client or take them separately to upload to external resources, such as a CDN.

To deliver content, we used a new system, also created by the platform team, the so-called DeliverySystem. This system allows you to receive a manifest created by the ResourceSystem and download resources from the specified source. This source could be either a monolithic build, individual APK files, or a remote CDN.
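The pack layout and manifest-driven delivery can be pictured with a small Python sketch. The manifest structure, the `PackEntry` fields, and the example URL are assumptions for illustration; the real ResourceSystem and DeliverySystem formats are not described in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PackEntry:
    name: str
    source: str    # "embedded" (shipped in the build) or "cdn"
    url: str = ""  # only meaningful for CDN-hosted packs


def packs_for_device(manifest, platform, quality):
    """Select the Main pack plus the quality pack for this platform."""
    wanted = ["Main", f"{platform}_{quality}"]
    by_name = {entry.name: entry for entry in manifest}
    missing = [name for name in wanted if name not in by_name]
    if missing:
        raise KeyError(f"manifest has no entry for: {missing}")
    return [by_name[name] for name in wanted]


def download_plan(entries):
    """Only CDN-hosted packs need a download; embedded ones are already local."""
    return [entry.url for entry in entries if entry.source == "cdn"]


# Example manifest with placeholder URLs.
EXAMPLE_MANIFEST = [
    PackEntry("Main", "embedded"),
    PackEntry("Android_LD", "cdn", "https://cdn.example.com/packs/Android_LD"),
    PackEntry("Android_HD", "cdn", "https://cdn.example.com/packs/Android_HD"),
]
```

An Android device capped at LD would then receive the embedded Main pack and download only `Android_LD`, never paying for HD content it cannot use.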

Initially, we planned to use the capabilities of Google Play (Play Asset Delivery) and the App Store (On-Demand Resources) to store resource packs, but on Android there were many problems with automatic client updates, as well as restrictions on the number of stored resources.

Further, our internal tests showed that a content delivery system with a CDN works best and is most stable, so we abandoned storing resources in stores and began storing them in our cloud.

Releasing the remaster

When the main work on the remaster was completed, it was time for release. However, we quickly understood that the originally planned size of 3 GB made for a poor download experience, even though a number of popular games on the market require users to download 5-10 GB of data. Our theory was that users would be accustomed to this new reality by the time we released our remastered game, but alas.

In other words, players were not used to such large downloads for mobile games, and we needed to find a solution quickly. We decided to release the remaster without HD quality and delivered HD to our users several iterations later.

In parallel with the development of the remaster, we actively worked to automate project testing. The QA team has done a great job ensuring that the status of the project can be tracked automatically. At the moment, we have servers with virtual machines where the game runs. With our in-house testing framework, scripts can press any button in the project, and the same scripts are used to run various scenarios when testing directly on devices.

We’re continuing to develop this system: hundreds of constantly-running tests have already been written to check for stability, performance, and correct logic execution. The results are displayed in a special dashboard where our QA specialists, together with the developers, can examine in detail the most problematic areas (including real screenshots from the test run).

Before the current system went into operation, regression testing took about a week, and that was on the old version of the project, which was much smaller than the current one in volume and amount of content. Thanks to automated testing, the current version of the game is tested in just two nights. This can be improved further, but for now we are limited by the number of devices connected to the system.

Towards the completion of the remaster, the Apple team contacted us and gave us the opportunity to participate in a new product presentation in order to demonstrate the capabilities of the new Apple A14 Bionic chip (released with new iPads in the fall of 2020) using our new graphics. During work on this mini project, a fully working HD version was created, capable of running on Apple chips at 120 FPS. In addition, several graphical improvements were added to demonstrate the power of the new chip.

As a result of a competitive and rather tough selection, our game was included in the autumn presentation of the Apple Event! The entire team gathered to watch and celebrate this event, and it was really cool!

Abandoning Photon and migrating to WorldState architecture

Even before the launch of the remastered version of the game in 2021, a new task had emerged. Many users had complained about network problems, the root of which was our solution at the time: the Photon transport layer, which was used to work with Photon Server SDK. Another issue was the large number of unstandardized RPCs that were sent to the server in no particular order.

In addition, there were also problems with synchronizing worlds and overflowing the message queue at the start of the match, which could cause significant lag.

To remedy the situation, we decided to move the network match stack to a more conventional model, in which the client communicates with the server by sending packets and receiving game state rather than through RPC calls.

The new online match architecture was called WorldState and was intended to remove Photon from gameplay.

In addition to switching the transport from Photon to UDP, based on the LiteNetLib library, this new architecture also involved streamlining the client-server communication system.
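To make the difference from per-action RPCs concrete, here is a minimal Python sketch of a state-based protocol: the client sends compact input packets, and the server replies with snapshots of the whole world. The packet layouts, field choices, and class names are assumptions made for illustration and do not reflect the actual WorldState wire format.

```python
import struct

INPUT_FMT = "<IffB"   # sequence number, move_x, move_y, fire flag
HEADER_FMT = "<IH"    # server tick, entity count
ENTITY_FMT = "<Hffh"  # entity id, x, y, health


def encode_input(seq, move_x, move_y, fire):
    """Client to server: one small packet per tick instead of many RPCs."""
    return struct.pack(INPUT_FMT, seq, move_x, move_y, int(fire))


def decode_input(data):
    seq, move_x, move_y, fire = struct.unpack(INPUT_FMT, data)
    return seq, move_x, move_y, bool(fire)


def encode_world_state(tick, entities):
    """Server to client: a full snapshot of (id, x, y, health) tuples."""
    payload = struct.pack(HEADER_FMT, tick, len(entities))
    for entity_id, x, y, health in entities:
        payload += struct.pack(ENTITY_FMT, entity_id, x, y, health)
    return payload


def decode_world_state(data):
    tick, count = struct.unpack_from(HEADER_FMT, data, 0)
    offset = struct.calcsize(HEADER_FMT)
    entities = {}
    for _ in range(count):
        entity_id, x, y, health = struct.unpack_from(ENTITY_FMT, data, offset)
        entities[entity_id] = (x, y, health)
        offset += struct.calcsize(ENTITY_FMT)
    return tick, entities


class ClientWorld:
    """Keeps only the newest snapshot; UDP may reorder or duplicate packets."""

    def __init__(self):
        self.last_tick = -1
        self.entities = {}

    def apply(self, data):
        tick, entities = decode_world_state(data)
        if tick <= self.last_tick:
            return False  # stale or duplicate snapshot, ignore it
        self.last_tick = tick
        self.entities = entities
        return True
```

Since every snapshot carries the complete state for its tick, a lost or late packet is simply superseded by the next one, which is one reason this model tends to be more robust against desynchronization than a stream of order-dependent RPCs.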

As a result of working on this feature, we reduced server-side costs (by switching from Windows to Linux and dropping Photon Server SDK licenses), ended up with a protocol that is much less demanding on users' devices, reduced the number of problems with state desynchronization between the server and the client, and created an opportunity to develop new PvE content.

It would be impossible to change the entire game code overnight, so work on WorldState was divided into several stages.

The first stage was a complete redesign of the communication protocol between the client and the server, along with moving mech movement onto the new architecture. This allowed us to create a new mode for the game: PvE. Gradually, mechanics began to move to the server, most recently damage and critical damage to mechs. Work on gradually transferring old code to WorldState mechanisms continues, and we will have several more updates this year.

In 2022, we launched on a new-to-us platform: Facebook Cloud. The concept behind the platform was interesting: running games on emulators in the cloud and streaming them to the browser on smartphones and PCs, without the need for the end player to have a powerful PC or smartphone to run the game; only a stable Internet connection is needed.

On the developer's side, the platform accepts one of two build types as its main build: an Android build or a Windows build. We took the first path because we have more experience with Android.

To launch our game on Facebook Cloud, we needed to make several modifications, like redoing the authorization in the game and adding cursor control. We also needed to prepare a build with all the built-in resources because the platform did not support CDN, and we needed to configure our integrations, which could not always run correctly on emulators.

A lot of work was also done on the graphics side to ensure the functionality of the graphics stack because Facebook emulators were not real Android devices and had their own characteristics in terms of driver implementation and resource management.

However, we saw that users of this platform ran into many problems, including both unstable connections and unstable emulators, and Facebook decided to close the platform at the beginning of 2024.

What happens constantly on the programmers' side, and cannot be discussed in detail in such a short article, is regular work with the project's technical risks, regular monitoring of technical metrics, constant work on memory and resource optimization, hunting for problems in partner SDKs and other third-party solutions, advertising integrations, and much more.

In addition, we continue the research and practical work on fixing critical crashes and ANRs. When a project runs on such a large number of completely different devices, this is inevitable.

Separately, we would like to note the professionalism of the people who work to find the causes of problems. These technical problems are often complex in nature, and it's necessary to overlay data from several analytics systems, as well as to conduct some non-trivial experiments, before the cause is found. The most complex problems frequently have no consistently reproducible case that can be tested, so we rely on empirical data and our professional experience to fix them.

A few words should be said about the tools and resources that we use to find problems in a project.

- AppMetr – an internal analytics solution that allows you to collect anonymized data from devices to study player problems. Some of the similar tools that might be better known to the reader are: Firebase Analytics, Google Analytics, Flurry, GameAnalytics, devtodev, AppsFlyer, etc.

- Google Firebase Crashlytics

- Google Play Console – Android Vitals

- Logs – a system of logs in debug builds that minimizes the time spent searching for problems during playtests within the studio

- Public Test Realm – our game has public test servers that allow you to test new changes and conduct various technical experiments

- Unity Profiler

- Xcode Instruments

This is just a small list of technical improvements that the project has undergone during its existence. It’s difficult to list everything that has been accomplished since the project is constantly developing, and technical managers are constantly working on drawing up and implementing plans to improve the product’s performance both for end users and for those who work with it inside the studio, including the developers themselves and other departments.

We still have many improvements planned for the product over the next decade, and we hope to share them in our next articles.

Happy birthday, War Robots, and thanks to the huge team of technical specialists who make all of this possible!