How memory, reasoning, and action-based learning transform AI agents from language processors to practical collaborators.

Introduction

A language model might be able to write an eloquent poem about a flower or generate instructions on how to plant one. However, ask it to physically plant a flower, and you’ll be met with silence. This stark contrast highlights the limitations of AI agents that are brilliant with language yet disconnected from the physical world.

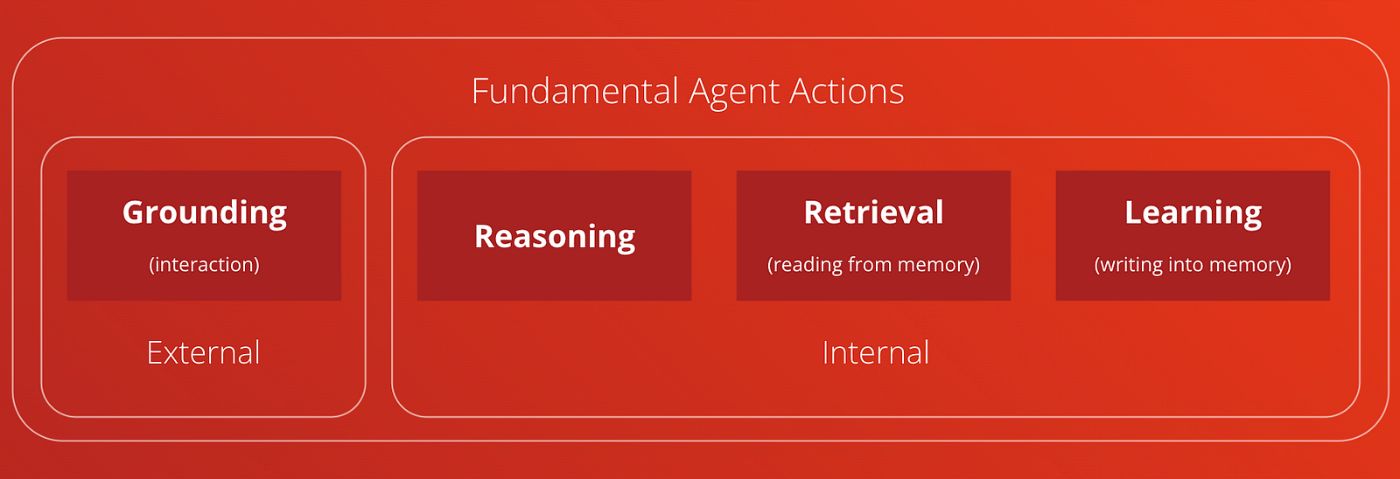

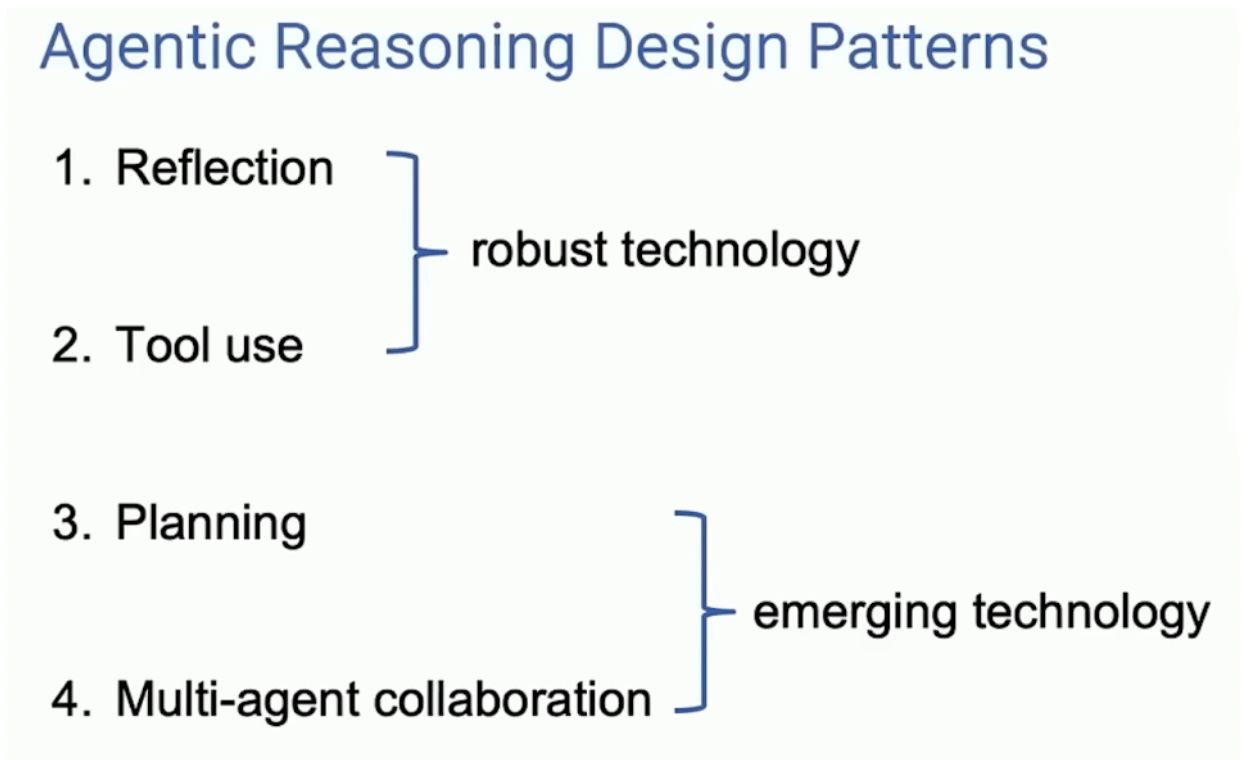

Researchers are addressing this limitation by exploring ways to ground AI agents in reality, integrating memory, reasoning, and action-based learning.

Large language models (LLMs) have made remarkable strides in natural language understanding and generation. Their fluency can make it seem as if they possess genuine comprehension, but this is often a facade. These models lack a fundamental connection to the physical world, which limits their ability to carry out actions, follow complex instructions, and fully engage with their environment. This disconnect between language and action severely hampers the practical applications of AI agents.

To bridge this gap, researchers are exploring ways to ground AI agents in the real world. Grounding is the process of anchoring language to actions, perceptions, and embodied experiences. This article discusses recent advancements in grounding language models, exploring how the addition of memory, reasoning, and action-based learning empowers AI agents to transition from mere text processors to transformative tools with significant real-world impact.

Memory and Reasoning for AI Agents

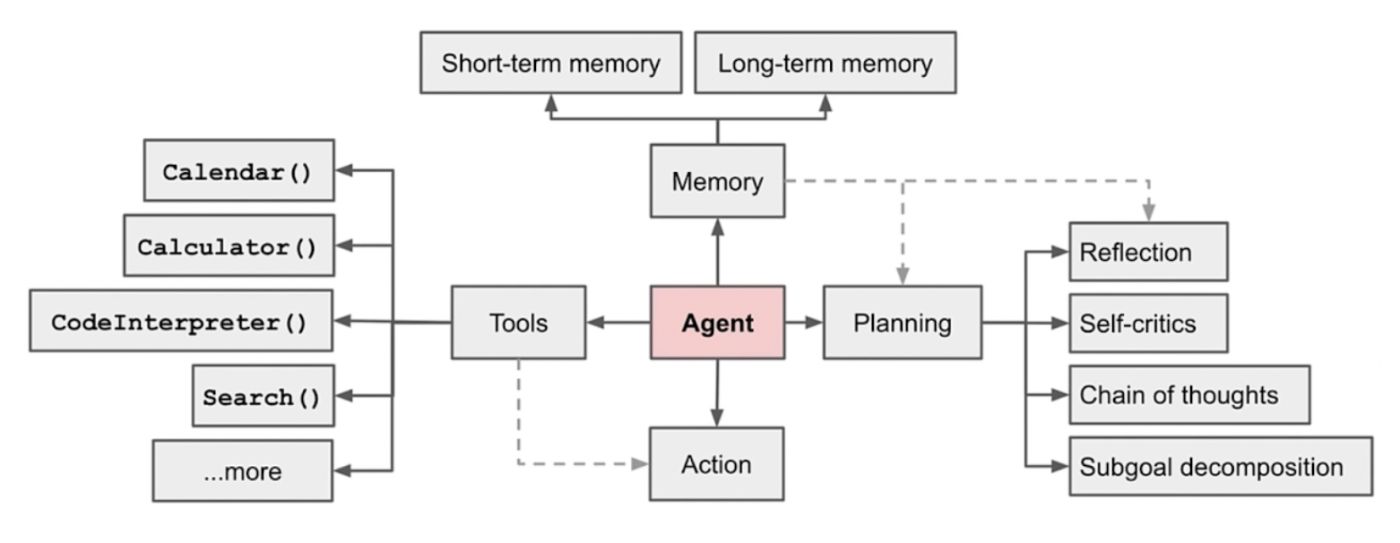

Traditional large language models often struggle to maintain long-term context and to apply their knowledge in practical settings. Researchers are addressing this limitation by integrating different memory mechanisms into these models. This work suggests that several types of memory can be essential for AI agents:

- Short-term Memory: Allows agents to store information pertinent to the current task, improving their focus and generating contextually relevant output.

- Long-term Memory: Stores a wealth of knowledge including facts, experiences, and procedures. Accessing this knowledge enables agents to draw connections, make inferences, and adapt to new situations with greater understanding.
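To make the two memory types concrete, here is a minimal sketch. It assumes a bounded buffer for short-term memory and a plain list searched by word overlap for long-term memory. The `AgentMemory` class and its method names are hypothetical, invented for illustration; real systems typically use more capable retrieval than keyword matching.

```python
from collections import deque


class AgentMemory:
    """Toy memory: a bounded short-term buffer plus a keyword-searched long-term store."""

    def __init__(self, short_term_size=3):
        # Short-term memory: only the most recent observations survive.
        self.short_term = deque(maxlen=short_term_size)
        # Long-term memory: everything the agent was asked to keep.
        self.long_term = []

    def observe(self, text):
        """Record something relevant to the current task."""
        self.short_term.append(text)

    def remember(self, text):
        """Store a fact, experience, or procedure for later recall."""
        self.long_term.append(text)

    def recall(self, query, top_k=2):
        """Return the long-term entries sharing the most words with the query."""
        query_words = set(query.lower().split())
        scored = []
        for entry in self.long_term:
            overlap = len(query_words & set(entry.lower().split()))
            if overlap > 0:
                scored.append((overlap, entry))
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [entry for _, entry in scored[:top_k]]

    def build_context(self, query):
        """Combine recalled knowledge with recent observations."""
        return self.recall(query) + list(self.short_term)
```

In this sketch, `build_context` is the point where the two memories meet: recalled knowledge supplies background, while the short-term buffer keeps the agent focused on the task at hand.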

Additionally, the ReAct paper (Yao et al., 2022b) highlights the importance of reasoning abilities for AI agents. Equipping language models with internal thought processes enhances their decision-making: it lets them understand complex instructions, weigh potential outcomes, and select actions that align with their objectives and knowledge.
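The idea of interleaving thoughts with actions can be sketched as a simple loop. This is an illustration only, not the ReAct authors' implementation: `scripted_policy` is a hypothetical stand-in for a language model, and `lookup` is a hypothetical tool backed by a one-entry dictionary.

```python
def scripted_policy(question, history):
    """Hypothetical stand-in for a language model: returns (thought, action, argument)."""
    if not history:
        return ("I need the planting season before I can answer.", "lookup", "tulip")
    last_observation = history[-1][3]
    return (f"The lookup says: {last_observation}", "finish", last_observation)


def lookup(term):
    """Hypothetical tool backed by a tiny in-memory knowledge base."""
    knowledge = {"tulip": "plant bulbs in autumn"}
    return knowledge.get(term, "no entry found")


TOOLS = {"lookup": lookup}


def run_agent(question, policy, max_steps=5):
    """Alternate reasoning and acting until the policy emits 'finish'."""
    history = []
    for _ in range(max_steps):
        thought, action, argument = policy(question, history)
        if action == "finish":
            return argument, history
        # Execute the chosen tool and feed the result back to the policy.
        observation = TOOLS[action](argument)
        history.append((thought, action, argument, observation))
    return None, history


answer, trace = run_agent("When should I plant tulips?", scripted_policy)
```

Each entry in `trace` records the thought, the action taken, and what came back, which is also what makes this style of agent easier to inspect afterwards.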

Memory and reasoning are intertwined facets that boost the abilities of AI agents. Memory allows information retention while reasoning empowers agents to interpret that information and act in a way that’s grounded in the real world.

Grounding Language in Actions for Real-World Tasks

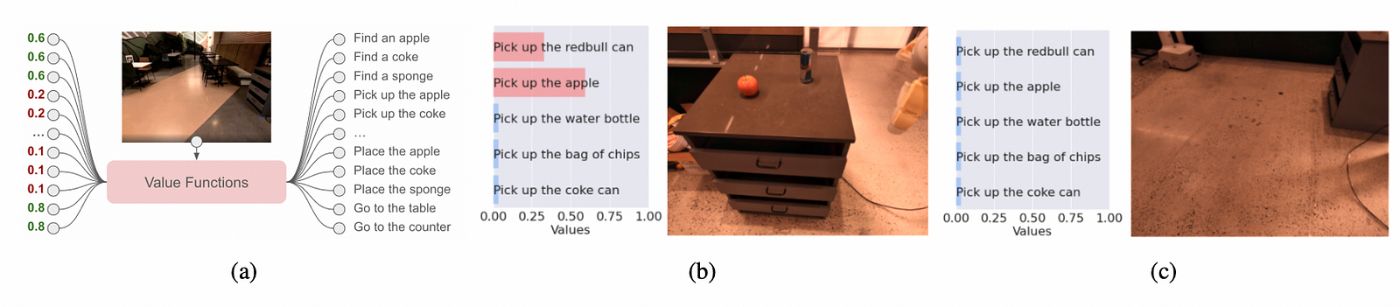

Bridging the gap between language and action is essential for AI agents to move beyond simple conversation and truly impact the world around them. Grounding means equipping AI models with the ability to understand our instructions and to determine what actions are both linguistically plausible and feasible within their environment. Let’s explore how this works in different contexts:

Robotic Systems: Imagine a warehouse robot tasked with “retrieving the blue box from the top shelf.” A grounded AI agent would need to:

- Skills Inventory: Possess a repertoire of actions it can perform, like moving, grasping objects, and navigating its surroundings.

- Success Evaluation: Be able to assess the likelihood of success for each action based on factors like sensor data and its knowledge of the environment (e.g., can it reach the shelf, is the box too heavy?).
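One way to combine a skills inventory with success evaluation is to score each skill by how well it matches the instruction and how likely it is to succeed right now, then pick the best product. The sketch below assumes both numbers are already available; the `Skill` fields and the hand-written values are hypothetical. In a real system they would come from a language model and from sensor-based estimates.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    name: str
    relevance: float  # how well the skill matches the instruction (0 to 1)
    success_probability: float  # estimated chance it works in the current state (0 to 1)


def choose_skill(skills):
    """Pick the skill with the highest combined score."""
    return max(skills, key=lambda s: s.relevance * s.success_probability)


# Illustrative values: the robot is still far from the shelf,
# so grasping is relevant but unlikely to succeed yet.
skills = [
    Skill("grasp blue box", relevance=0.9, success_probability=0.1),
    Skill("drive to shelf", relevance=0.6, success_probability=0.9),
    Skill("grasp red box", relevance=0.2, success_probability=0.8),
]

best = choose_skill(skills)
print(best.name)  # prints "drive to shelf"
```

Multiplying the two scores means a skill must be both relevant and feasible to win, so the robot drives to the shelf before it attempts a grasp that would fail from where it stands.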

Virtual Assistants: Grounding is equally relevant within digital environments. Consider an AI assistant helping you with spreadsheet analysis. An instruction like “find the average sales figures, excluding outliers” necessitates:

- Internal Knowledge: Understanding data manipulation concepts like averages and outlier detection.

- Action Selection: Knowing how to translate those concepts into actions within the software environment (e.g., selecting data filters and applying formulas).
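For the spreadsheet instruction above, the concrete action might look like the following sketch. It assumes that "outlier" means a value outside 1.5 times the interquartile range, which is one common convention and not something the instruction itself specifies. The function name and sample figures are made up for illustration.

```python
import statistics


def mean_without_outliers(values, k=1.5):
    """Average the values that fall within k * IQR of the quartiles."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    # Keep only values inside the fences; everything else counts as an outlier.
    kept = [v for v in values if low <= v <= high]
    return statistics.mean(kept), kept


# Hypothetical weekly sales figures with one data-entry error.
sales = [100, 98, 102, 100, 99, 101, 100, 1000]
average, kept = mean_without_outliers(sales)
```

A grounded assistant would also need to tell the user which definition of "outlier" it applied, since a different threshold can change the answer.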

Hybrid Systems: An intriguing use case is when an AI agent acts as an intermediary between language and an existing interface. It might help a user control a complex piece of machinery via voice commands despite lacking direct access to its hardware controls. Here, the agent would need to:

- Interface Awareness: Understand the existing controls and functionalities of the machinery.

- Action Mapping: Map user instructions (“increase pressure”) to the appropriate commands within the interface (e.g., turning a knob).
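Action mapping can be as simple as a lookup from normalized phrases to interface commands. The sketch below assumes a fixed, hand-written table; the control names (`pressure_knob`, `emergency_stop`) and the step values are hypothetical. A production system would more likely use a language model to handle paraphrases.

```python
# Hypothetical interface description: phrase -> (control, step)
COMMAND_MAP = {
    "increase pressure": ("pressure_knob", +1),
    "decrease pressure": ("pressure_knob", -1),
    "stop": ("emergency_stop", 0),
}


def map_instruction(instruction):
    """Return the interface command for an instruction, or None if unsupported."""
    # Lowercase, collapse repeated whitespace, and drop trailing punctuation.
    normalized = " ".join(instruction.lower().split()).rstrip(".!")
    return COMMAND_MAP.get(normalized)


command = map_instruction("Increase  pressure!")
```

Returning `None` for unknown instructions is a deliberate choice here: when the agent controls machinery, refusing an instruction it cannot map is safer than guessing.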

Key Considerations in Grounding

Several factors influence the way AI agents ground their understanding of language:

- Environment: Is the agent operating primarily in the physical world (robot), a software environment, or a combination of both?

- Action Types: Are the available actions pre-programmed (a robot with specific motions) or learned more organically through real-world interaction?

- Context-Awareness: A grounded AI’s success often depends on how well it understands its surroundings, its own abilities, and the constraints it must work within.

Grounding AI agents allows them to become more than just language processors. They gain the ability to execute tasks, learn through interactions with their environment, and become genuine collaborators in both physical and digital spaces.

Real-World Applications in Knowledge Work

Grounded AI agents have tremendous potential to transform how we conduct research, handle data, and make complex decisions. Let’s focus on a few key areas where these agents could provide significant value:

- AI Research Assistants: Imagine an AI agent capable of not just retrieving research papers but understanding the nuances of your field. It could summarize findings, identify potential knowledge gaps, suggest connections to other lines of research, or even proactively point out limitations in your methodology.

- Data-Driven Analysis: A grounded AI agent could go far beyond simple visualizations. Imagine asking, “Show me correlations between customer feedback trends and sales data over time,” and receiving insights that factor in known biases and anomalies in your datasets.

- Financial Market Insights: A grounded AI assistant could help you make informed decisions by responding to requests like, “How have geopolitical events historically impacted similar assets?” or “Model different portfolio scenarios based on projected market volatility.”

- Task Automation and Optimization: Many knowledge work processes involve repetitive tasks or follow predictable patterns. AI agents, able to understand instructions and execute corresponding actions, could automate report generation, streamline data preparation, or proactively suggest enhancements to your workflows.

In knowledge-intensive fields, grounded AI could become a powerful extension of our own capabilities, saving time, uncovering deeper insights, and improving decision-making.

Challenges and Future Directions

While grounding AI agents holds immense promise, there are significant challenges to further development and wider adoption:

- Data and Context: Real-world actions are deeply intertwined with context. While an agent might learn to “turn on a light,” the specifics of what lights exist, their controls, and the current room state require a rich understanding that’s difficult to capture in traditional datasets.

- Generalization: It’s relatively easy to train an agent to perform a specific, well-defined task. Generalizing an agent’s understanding to adapt to new situations, unseen tasks, and changing environments remains an open research problem.

- Bias and Explainability: Like any AI system, grounded agents are susceptible to reflecting biases in their training data. Additionally, understanding the rationale behind an agent’s decisions, particularly those involving complex reasoning, becomes crucial for trust and responsible use.

Research into overcoming these hurdles will drive future breakthroughs in grounded AI. We could see:

- Self-Learning Agents: Agents that actively explore their environment, learning through trial and error, may acquire a better grounding in real-world tasks and limitations.

- Meta-Learning for Adaptation: Techniques that allow AI agents to ‘learn how to learn’ could enable them to adapt to new tasks and scenarios more quickly and effectively.

- Transparent Reasoning: Methods for making an agent’s ‘thought process’ explainable will be crucial for building trust and ensuring ethical use of these powerful tools.

Despite the challenges ahead, the potential for grounded AI agents to revolutionize how we interact with and utilize intelligent systems remains undeniable. Continued research in this area will unlock AI’s full potential as a practical and impactful tool.

Conclusion

Large language models have revolutionized the capabilities of AI systems, but their lack of real-world grounding limits their practical usefulness. Research into memory, reasoning, and bridging language models to actions is beginning to address this significant limitation. The SayCan model and other similar developments demonstrate how AI agents can learn to understand instructions and perform actions that are both linguistically and physically feasible.

This grounding of AI opens up numerous possibilities in robotics, software assistance, and knowledge-driven fields. While challenges remain in terms of data, generalization, and transparency, advancements in grounded AI will fundamentally transform how we interact with and benefit from these intelligent systems.

From Information to Collaboration

The most significant impact of grounding lies in shifting our interactions with AI from passive information retrieval to active collaboration. As AI agents become increasingly capable of understanding our intent, interpreting complex instructions, and acting within the constraints of the real world, they transition from mere tools into partners.

We can envision a future where we work alongside AI assistants who augment our decision-making, seamlessly execute tasks on our behalf, and proactively suggest solutions based on a deep understanding of our goals and context.

Final Thoughts

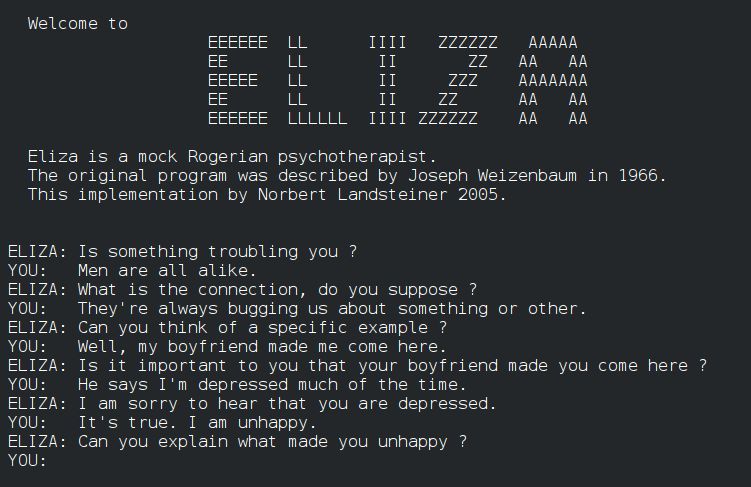

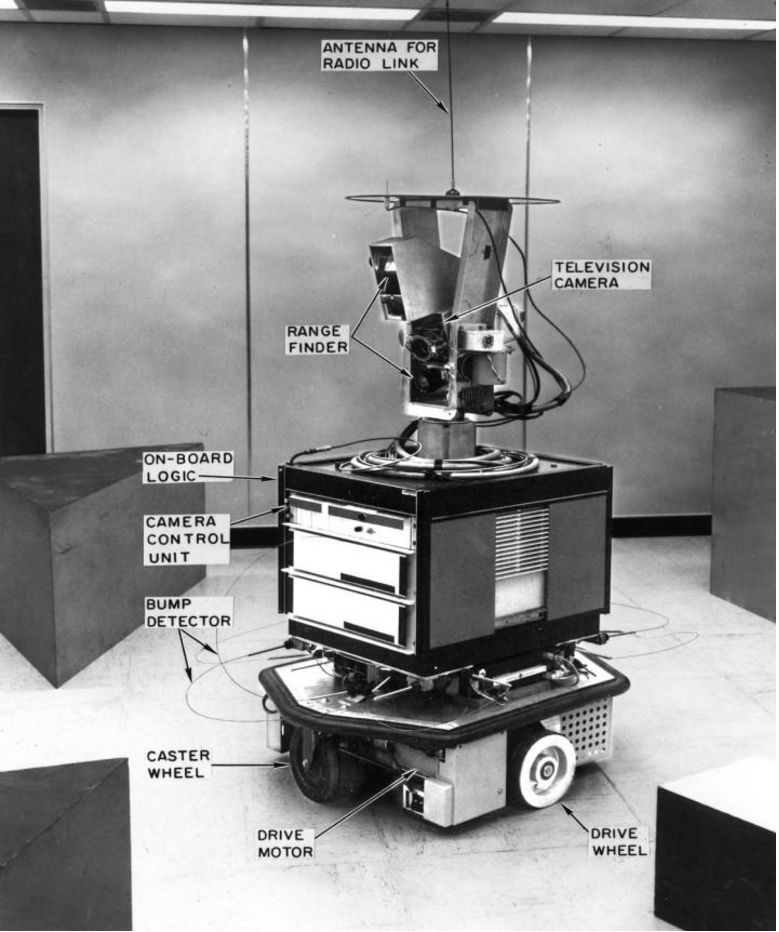

The integration of grounding in AI agents builds upon the foundations laid by earlier systems like ELIZA, SHRDLU, and Shakey the robot. Projects like ChatDev and Cognition Labs’ Devin illustrate the current efforts to bring grounded AI to various domains. As grounded AI agents become more sophisticated, they hold the potential to revolutionize how we work, learn, and interact with the world around us.